Advancing Cloud Computing’s Final Frontier

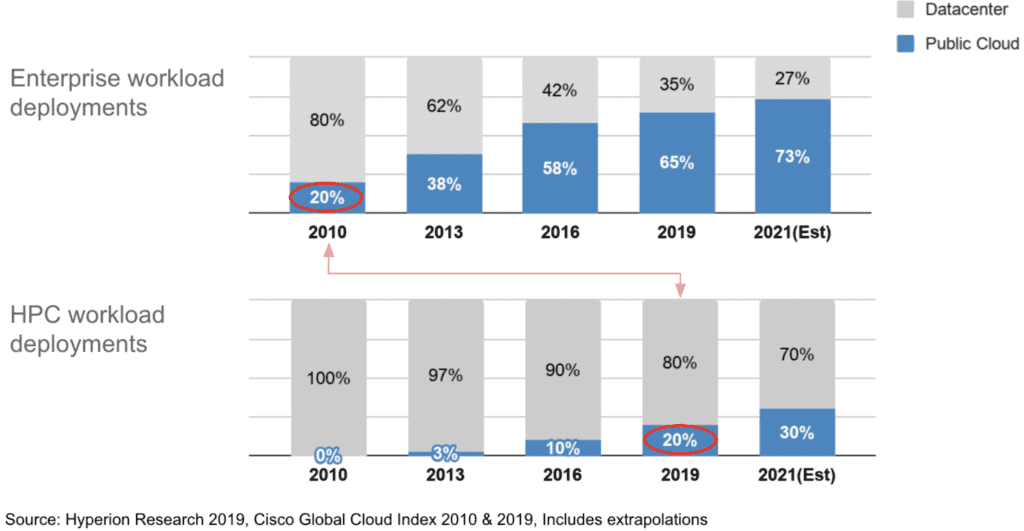

Public cloud computing’s momentum is undeniable. In less than a decade, businesses went from debating whether to use public cloud to running the majority of workloads on it (58% as of 2016, according to Cisco). Cisco expects this number to jump to 73% by 2021. Repatriation of workloads to private data centers does occur, but it is a rare exception. Initially, many organizations found reasons to keep workloads on prem, but as we heard in Star Trek, “now they are all Borg.”

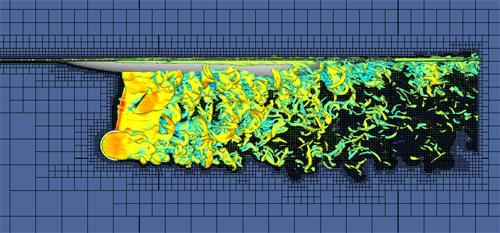

There’s been one holdout to this trend, however, and that’s high performance computing (HPC). Engineers use HPC to create the planes, cars, medicine, electronics, and AI-enabled services we use every day. Scientists use HPC to power weather, climate, and astrophysics simulations that push the boundaries of our knowledge. Common techniques include computational fluid dynamics (CFD), electronic design automation (EDA), molecular dynamics calculations, and artificial intelligence model training.

As of 2019, only 20% of HPC workloads were running in the cloud, according to Hyperion Research. Unlike cloud-native web applications that run on commodity compute, HPC infrastructure is specialized, with large numbers of cores, large amounts of memory, and high-speed interconnects between machines. This specialized software and hardware architecture has held back HPC’s transition to public cloud.

HPC Cloud is Already Here

Although only 20% of HPC workloads run in the public cloud, that’s double the share of just two years earlier. That’s explosive growth for enterprise IT, and it’s driven by several factors. First, the companies that have been using simulation to engineer new products are just starting to hit their stride and are expanding their use cases, but now with a cloud-first mindset. Second, from AI to digital twins, mainstream enterprises are also jumping into HPC, driving growth of the market overall. Third, independent software vendors (ISVs) that specialize in simulation software, the primary workload of HPC infrastructure, are finally embracing cloud deployments along with consumption-based licensing models for their software. Lastly, cloud providers, recognizing the growth of the HPC market, are pushing hard to deliver new specialized HPC infrastructure.

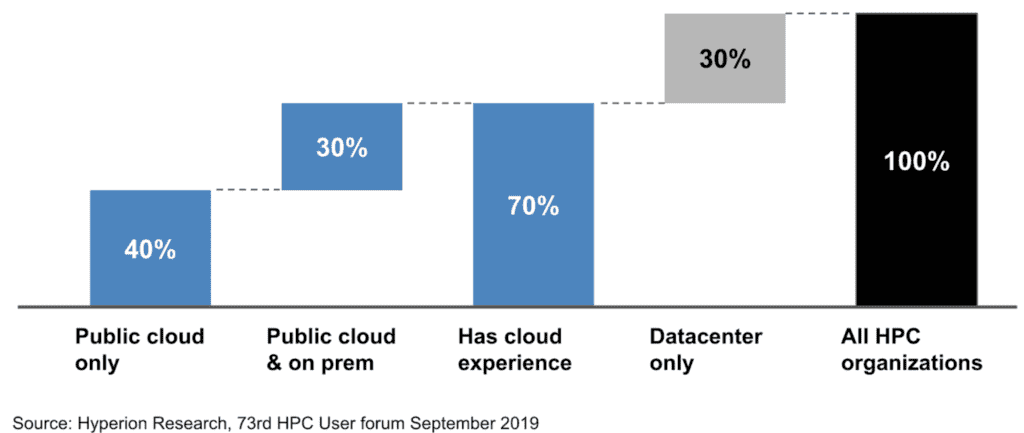

From an organizational standpoint, HPC teams are clearly dipping their toes into public cloud, if not jumping in entirely. Of all organizations that run HPC workloads, 70% are running at least some workloads in the cloud, and 40% are using public clouds exclusively.

Steps to HPC Cloud’s Success

Given the unique nature of HPC infrastructure, simulation software, and its users, HPC’s transition to cloud is likely to differ from that of traditional workloads like CRM, ERP, and home-grown applications. To see why, it’s useful to look at HPC in terms of IaaS-, PaaS-, and SaaS-level services, and the roles cloud providers could play at each level.

All leading cloud providers already deliver HPC infrastructure (IaaS), with specialized VMs or physical servers along with high-speed interconnects. While that’s a great step forward, it’s not enough to move most HPC workloads to public clouds. Unlike traditional monolithic applications, which can run encapsulated in VMs in the public cloud just as easily as on prem, running simulation software on HPC infrastructure requires specialized HPC engineering expertise (e.g., a cluster design optimized for a particular simulation). So HPC IaaS adds flexibility and efficiency to the infrastructure, but it doesn’t reduce overall complexity, and the dynamic nature of public cloud infrastructure arguably adds to it.

The cloud PaaS approach is not a likely model for HPC in the cloud, given the proprietary nature of the software used. New platform technologies like Kafka and Kubernetes saw rapid adoption by application developers building home-grown applications. Cloud providers quickly launched managed services for these open source technologies, which are applicable across nearly all industries. Examples include Amazon MSK (managed Kafka) and Azure AKS (managed Kubernetes). Most HPC users, on the other hand, are researchers, engineers, and mathematicians, generally not software developers. An electronics designer running EDA simulations or an aerospace engineer running CFD simulations is an end user of industry-specific proprietary software, not a developer eager to try the latest trending open source tools on GitHub.

HPC in the Cloud: Going beyond IaaS and PaaS

This takes us to SaaS. How do we provide simulation software as a service to the engineers and scientists creating new, world-changing products? Every industry uses specific types of simulation, each with a distinct set of leading ISVs delivering packaged software solutions. These mature and sophisticated applications are optimized to run on specific HPC clusters. Cloud computing is not their area of focus, and most ISVs will not simply rebuild their software as a cloud service the way Microsoft did with Office/Office 365. Cloud providers, for their part, are not experts in simulation software across these industries, and simulation professionals are proficient in, and invested in, the software suites they already use.

This means that for users to run simulations on the public cloud, organizations need to combine readily available cloud provider HPC infrastructure with a broad set of simulation software, and operationalize the combination as a service. This worthwhile but challenging undertaking requires several steps. First, they must manage the dynamic infrastructure made available by cloud providers: deploying, operating, and terminating it according to best-practice HPC cluster designs. Second, they must manage the lifecycle of simulation software suites, including their system requirements, and deploy them on demand. Third, they must manage the licensing of simulation software consumed on dynamic HPC infrastructure. Lastly, they must make appropriate cost/performance tradeoffs at each of these steps.
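To make the last step more concrete, here is a minimal Python sketch of one such cost/performance tradeoff: picking the cheapest cluster configuration that still finishes a simulation by a deadline. It assumes that infrastructure and software licenses are both billed per node-hour and that a runtime estimate for each configuration is already known. The `ClusterOption` type, the `cheapest_within_deadline` helper, and all prices and runtimes are hypothetical and illustrative; they do not represent how Rescale or any cloud provider actually makes this decision.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class ClusterOption:
    """A hypothetical cluster configuration; every figure is illustrative."""
    name: str
    nodes: int
    node_hourly_cost: float     # assumed infrastructure cost per node-hour
    license_hourly_cost: float  # assumed software license cost per node-hour
    runtime_hours: float        # assumed wall-clock estimate for one simulation

    def total_cost(self) -> float:
        # In this sketch, infrastructure and licenses are both billed per node-hour.
        return self.nodes * self.runtime_hours * (
            self.node_hourly_cost + self.license_hourly_cost
        )


def cheapest_within_deadline(
    options: Iterable[ClusterOption], deadline_hours: float
) -> Optional[ClusterOption]:
    """Return the lowest-cost option that finishes by the deadline, or None."""
    feasible = [o for o in options if o.runtime_hours <= deadline_hours]
    return min(feasible, key=lambda o: o.total_cost(), default=None)


# Hypothetical options: more nodes finish sooner but cost more in total.
options = [
    ClusterOption("small", nodes=4, node_hourly_cost=3.0, license_hourly_cost=2.0, runtime_hours=20.0),
    ClusterOption("medium", nodes=16, node_hourly_cost=3.0, license_hourly_cost=2.0, runtime_hours=6.0),
    ClusterOption("large", nodes=64, node_hourly_cost=3.0, license_hourly_cost=2.0, runtime_hours=2.0),
]

if __name__ == "__main__":
    for deadline in (24.0, 8.0, 1.0):
        choice = cheapest_within_deadline(options, deadline)
        print(deadline, choice.name if choice else "no feasible option")
```

With these made-up numbers the small, medium, and large clusters cost 400, 480, and 640 per run, so a 24-hour deadline selects the small cluster, an 8-hour deadline forces the medium one, and a 1-hour deadline has no feasible option. The deadline, not raw speed or raw price alone, decides which tradeoff wins.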

The only way to do this is with automation – and this is where Rescale comes in.

Enabling HPC in the Cloud

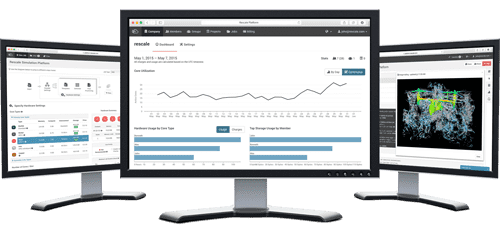

Rescale ScaleX is a cloud service that delivers SaaS-like simplicity for running HPC workloads on the world’s largest HPC cloud network, with complete architectural control. This means engineers and researchers can use any simulation software or ML tool (e.g., ANSYS, Cadence, Siemens, TensorFlow) on any major cloud provider’s HPC infrastructure (e.g., AWS, Azure, GCP) with turnkey simplicity.

Rescale is enabling HPC in the cloud for end users, ISVs, and cloud service providers alike.

Organizations that use Rescale see several benefits. First, much lower HPC infrastructure and simulation software costs per simulation under an OPEX model. Second, dramatically faster time to market for new products, by bringing cloud flexibility to the simulation software engineers are already familiar with and by improving collaboration. Third, an expanded solution space, enabling engineers to go beyond validating designs to running exploratory simulations against a broader set of options. Lastly, comprehensive visibility into operating costs across the entire HPC stack, including software licensing, infrastructure spending, and consumption by individuals and teams.

Beyond end user organizations, ISVs across industries are using Rescale to automate cloud delivery of their software. Cloud service providers look to Rescale to drive demand to their HPC infrastructures.

For more information, visit rescale.com.