Big Compute Podcast: Accelerating HPC Workflows with AI

In this Big Compute Podcast episode, Gabriel Broner hosts Dave Turek, Vice President of HPC and Cognitive Systems at IBM, to discuss how AI can accelerate HPC workflows.


Overview and Key Comments

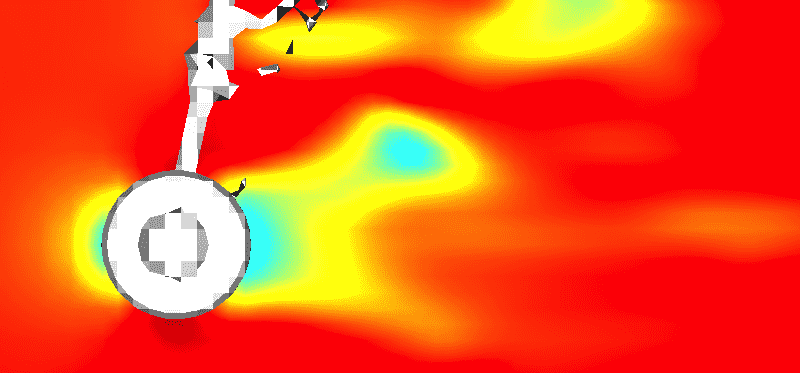

HPC has traditionally relied on simulation to represent the real world. Over the last several years, AI has grown significantly, driven by innovation, increased compute capacity, and new architectures. HPC can benefit from AI techniques in two ways. The first is to augment what people do: preparing simulations, analyzing results, and deciding which simulation to run next. The second is to step back and ask whether AI techniques can solve the problem instead of simulation. We should think of AI as expanding the toolbox available to HPC users, and we should learn these techniques and incorporate them, because in the future the separation between HPC and AI may simply not exist.

HPC + AI accelerate workflows

On how HPC and AI together can address problems better, Dave Turek says:

“We talk about HPC and AI as if they were two different things. I like to talk about the amalgamation of these two fields. They need to be brought together under one umbrella. Somehow we have lost sight of the difference between algorithms and workflows. From a commercial perspective, it’s important to tackle the whole workflow, not parts of it; otherwise you risk working at the margin. Some years ago I was working with a company in the oil industry. After a day of discussing algorithms, we realized that the biggest problem they faced was how to sort data faster. They had a workflow perspective of the problem. If I had reduced simulation time to zero, I would have cut only 5-8% of the total time. If I had made sorting 10 times faster, I would have cut the total time by 40-50%. Once you embrace the notion of workflow, you see many additional domains where AI can help.”
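The oil-industry numbers follow the logic of Amdahl's law applied to workflow stages: speeding up a stage saves at most the share of total time that stage occupies. The sketch below is only an illustration of that arithmetic; the stage shares are back-calculated from the quoted percentages, not figures reported by the company, and the function names are hypothetical.

```python
def time_saved(fraction: float, speedup: float) -> float:
    """Share of total workflow time saved when a stage that takes `fraction`
    of the total runs `speedup` times faster (Amdahl's law).
    Pass speedup=float('inf') to model a stage that takes zero time."""
    return fraction * (1.0 - 1.0 / speedup)


def implied_fraction(saved: float, speedup: float) -> float:
    """Inverse: the stage share needed for a given speedup to save `saved`."""
    return saved / (1.0 - 1.0 / speedup)


# Simulation is 5-8% of the workflow: even an infinite speedup saves only that.
for f_sim in (0.05, 0.08):
    print(f"simulation share {f_sim:.0%}: zero-time simulation saves {time_saved(f_sim, float('inf')):.0%}")

# A 10x faster sort that saves 40-50% implies sorting was roughly 44-56% of the workflow.
for saved in (0.40, 0.50):
    print(f"10x sort saving {saved:.0%} implies sort share {implied_fraction(saved, 10):.0%}")
```

The point of the comparison is that the stage with the largest share of wall-clock time, not the most computationally interesting one, bounds the achievable gain.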

Growth in AI

“Innovation is driving the uptake of AI. We are in an embryonic state. On a timescale, we are at t=0.001. People are doing experimental work, but there will be a burgeoning of deployment very shortly. Some areas, like autonomous driving, raise a series of ethical issues, while other areas, like manufacturing, are largely free of ethical considerations, so there is less of a barrier to acceptance and we will see significant growth.”

Intelligent Simulation

“Intelligent simulation is the incorporation of AI methodologies to make simulation better. Think about the problem of executing simulations: you have a hypothesis, run simulations, analyze the results, and recalibrate the simulations to run again with some refinements. The goal is to run as few simulations as possible. That human process can be augmented by introducing AI to analyze results and decide what the next simulations will be. AI can become an effective director of that work.”
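The loop described above (run, analyze, choose the next run) can be sketched as surrogate-guided search. This is a minimal illustration under stated assumptions, not IBM's method: `run_simulation` is a hypothetical toy cost function standing in for an expensive HPC job, the surrogate is a simple inverse-distance-weighted estimate, and the exploration bonus is an arbitrary choice. Production systems often use Bayesian optimization with Gaussian-process surrogates instead.

```python
import math


def run_simulation(x: float) -> float:
    """Hypothetical stand-in for an expensive simulation; returns a cost to minimize.
    A real workflow would submit an HPC job here."""
    return (x - 0.63) ** 2 + 0.05 * math.sin(12 * x)


def surrogate(x: float, history) -> float:
    """Cheap inverse-distance-weighted estimate of the cost at x from past runs."""
    w_sum, wy_sum = 0.0, 0.0
    for xi, yi in history:
        d = abs(x - xi)
        if d < 1e-12:
            return yi  # already simulated: use the known result
        w = 1.0 / d ** 2
        w_sum += w
        wy_sum += w * yi
    return wy_sum / w_sum


def choose_next(candidates, history, explore: float = 0.5) -> float:
    """The 'AI director': pick the untried candidate with the lowest predicted cost,
    discounted by a bonus for being far from points already simulated."""
    def score(x):
        gap = min(abs(x - xi) for xi, _ in history)
        return surrogate(x, history) - explore * gap

    untried = [x for x in candidates if all(abs(x - xi) > 1e-12 for xi, _ in history)]
    return min(untried, key=score)


def intelligent_sweep(candidates, budget: int):
    """Run at most `budget` simulations instead of one per candidate."""
    budget = min(budget, len(candidates))
    history = [(x, run_simulation(x)) for x in (candidates[0], candidates[-1])]
    while len(history) < budget:
        x = choose_next(candidates, history)
        history.append((x, run_simulation(x)))
    return min(history, key=lambda p: p[1]), len(history)


grid = [i / 100 for i in range(101)]  # 101 candidate parameter settings
(best_x, best_cost), used = intelligent_sweep(grid, budget=15)
print(f"best x={best_x:.2f} cost={best_cost:.4f} after {used} of {len(grid)} simulations")
```

The design choice is the trade-off in `choose_next`: the surrogate term exploits what past runs suggest, while the distance bonus keeps the director from ignoring unexplored regions.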

Results

“We have seen stunning results. We have clients who run millions of simulations per day. With the incorporation of AI, we have seen examples where we cut the number of simulations to run by more than two-thirds. These results are replicable, although not yet generalizable across different problem types. We have achieved reductions as large as 95%, but that should not be the overall expectation.”

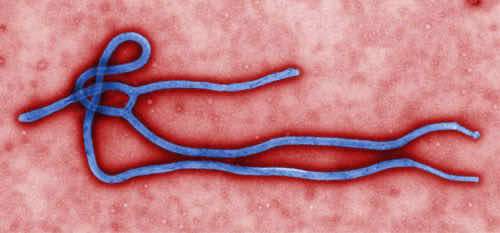

“In the pharmaceutical domain, we have used this approach to screen potential drug compounds for efficacy against disease vectors, and we were able to reduce the number of compounds to evaluate from 20,000 to 1,000. In the domain of chemical formulation, when analyzing combinations of compounds to produce a molecular outcome, we reduced the amount of computing by more than 60%. These approaches are now being implemented operationally by the clients we have been working with.”
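Cutting 20,000 candidates to 1,000 is a 95% reduction, matching the best case mentioned earlier. One common way to get such a funnel is surrogate screening: score every candidate with a cheap model and send only the top-ranked ones to full evaluation. The sketch below assumes that approach; the compound IDs are hypothetical and the random scores are a placeholder for a trained model's predictions, not real data.

```python
import heapq
import random


def screen(compounds, predict, keep: int):
    """Rank all candidates with a cheap predictor and return only the
    `keep` most promising ones for full (expensive) evaluation."""
    return heapq.nlargest(keep, compounds, key=predict)


random.seed(0)
compounds = [f"cmpd-{i:05d}" for i in range(20_000)]  # hypothetical compound IDs
scores = {c: random.random() for c in compounds}      # placeholder for predicted efficacy
shortlist = screen(compounds, scores.get, keep=1_000)

reduction = 1 - len(shortlist) / len(compounds)
print(f"evaluate {len(shortlist)} of {len(compounds)} ({reduction:.0%} fewer)")
```

`heapq.nlargest` avoids sorting the full list, which matters little at 20,000 items but keeps the screening step cheap as candidate libraries grow.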

Cognitive Discovery

“80% of the workflow is about data: how you get data, how you organize it, and how you architect it. Intelligent simulation is a strategy of augmentation. Cognitive discovery is a strategy of replacement. Let’s set aside the idea of simulation and use AI to solve HPC problems. Could I solve the same problems without simulation?”

“We developed tools that ingest data, turn it into information, architect that information, and automatically generate neural nets. We are using these tools to provide access to public information at a scale beyond what humans are able to analyze. For example, in 2018 there were 450,000 scientific papers in materials science alone.”

AI levels the playing field

“Data scientists are concentrated in urban areas of the United States. If I am located somewhere else, that’s a problem. How do you deal with the shortage of skills? How much of this activity can we automate to make AI more equally accessible?”

“By automating the process from data to inference, and providing access to collective know-how, small companies can behave like big companies. Historically, markets and economics have emphasized the benefits of scale. With AI, everybody can compete on a level playing field, where small and midsize companies punch above their weight: a 100-person company can now compete with a 10,000-person company.”

Access in the cloud

“With IBM’s partnership with Rescale for HPC in the cloud, all the techniques we talked about are available in the cloud: IBM Q offers access to quantum computing, and IBM React/IBM RXN offers access to a cognitive discovery system, built in Zurich, that assesses the outcomes of chemical reactions.”

The Future and how our jobs will change

“In 5-10 years, this will be a significant element of the analyst toolkit. For us, it’s a matter of embracing a new set of tools. In HPC, people have developed new tools over time. For example, the development of MPI facilitated parallel programming. This is no different and no more difficult. The emerging frameworks have enabled us to use these new tools. Study them, learn them, participate. This will give rise to new opportunities.”

Dave Turek

Dave is Vice President for HPC and Cognitive Systems at IBM. Over the years at IBM, Dave has been responsible for Exascale Systems and high performance computing strategy, helped launch IBM’s Grid Computing business, and started and ran IBM’s Linux Cluster business. As a development executive, he was responsible for IBM’s SP supercomputer program as well as the mainframe version of AIX and other Unix software. In that capacity, he orchestrated IBM’s initial development effort in support of the US Accelerated Strategic Computing Initiative at Lawrence Livermore National Laboratory.

Gabriel Broner

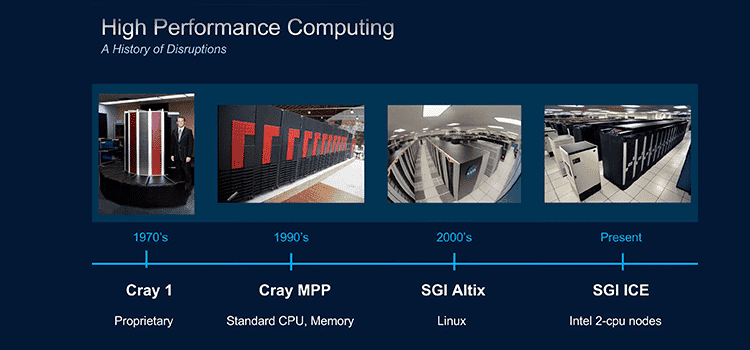

Gabriel Broner is VP & GM of HPC at Rescale. Prior to joining Rescale in July 2017, Gabriel spent 25 years in the industry as OS architect at Cray, GM at Microsoft, head of innovation at Ericsson, and VP & GM of HPC at SGI/HPE.