Modernize your SPDM strategy with Rescale and the Cloud

Simulation environments face unique challenges: fragmented software and hardware, large simulation data sets, and a complex execution process. Data is isolated and managed using technology that is more than 10 years old. For example, simulation data is managed on the engineering desktop or, at best, through a shared NAS that relies heavily on naming conventions. Files are shared with remote users via email or FTP. This hurts the productivity of simulation experts and makes traceability hard to maintain. However, recent advances in cloud technologies have made it possible to modernize Simulation Process & Data Management (SPDM).

OVERVIEW

Simulation Process & Data Management (SPDM) is a technology trend that has existed since the year 2000, aiming to build a simulation method, provide traceability, and increase productivity through automation. “Despite the successes achieved with SPDM, the adoption of information systems to manage simulation data by simulation engineers is still very low at 1%-2%,” according to NAFEMS. The three major legacy inhibitors are a) lack of openness: commercial solutions are proprietary and lack standards; b) disruption to existing systems: implementation requires major disruptions to the user experience, IT environment, and simulation processes; and c) time to deploy.

SOLUTION

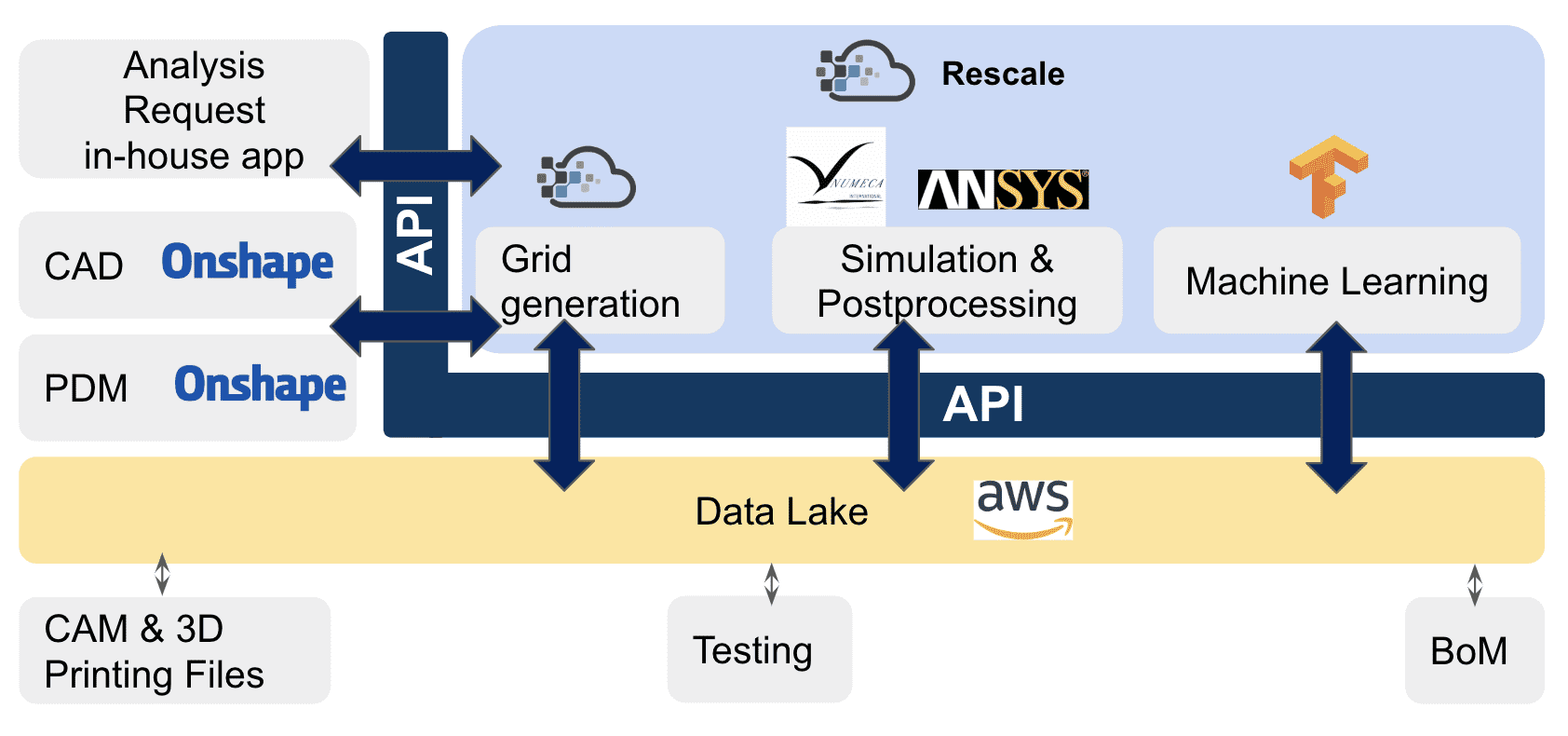

Because of recent advancements in cloud technology, IT leaders can now rapidly build their SPDM stack, avoiding vendor lock-in and minimizing disruption to mission-critical applications. A best-of-breed cloud SPDM architecture eliminates manual integration and incorporates cloud-based data stores or data lakes, modern workflow management tools, support for a wide range of commercial applications and license management, and a fully managed stack for automating how these tools integrate. This stack might look like the following:

Figure 1: Example of cloud SPDM solution stack

Rescale offers an integral component to help integrate this best-of-breed stack, and focuses on addressing all the SPDM challenges related to simulation process execution. The ScaleX platform transforms a difficult, complicated and inconvenient HPC experience into something easy to maintain, simple to integrate, and intuitive to use. This provides an abstraction layer that automates data movement, process orchestration, and dashboarding across application and execution environments. It lowers barriers to entry for engineers to leverage HPC resources and captures all the precious simulation information without effort from the users.

In this article, we will explore the following technology enablers that make cloud SPDM modernization possible:

- Full-stack simulation workflow formulation

- Automatic HPC data capture

- Globally optimized architecture

- Systematic governance

1. Full-stack simulation workflow formulation

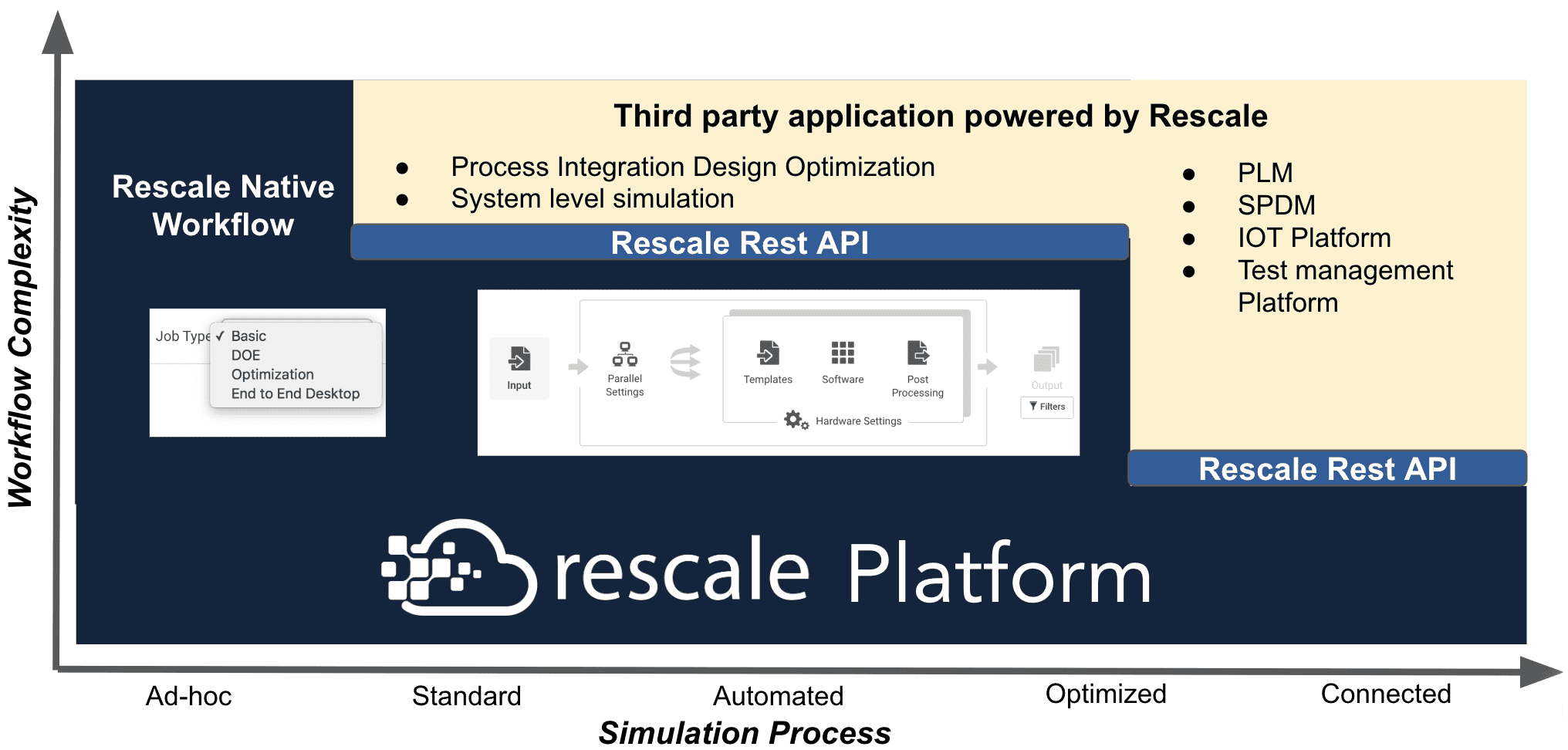

As engineering teams use multiple applications and utilities from various vendors, the ScaleX platform allows these disparate tools to interact with each other within the Rescale cloud network (see figure 2). Users can access the best available hardware for every step of the workflow.

Figure 2: Rescale-powered simulation workflow

Process flows can be authored within the ScaleX portal through the following frameworks:

- Basic workflow: to capture a method with one or more applications that run on one cluster

- DOE workflow: to capture a method with a set of independent jobs with varying input parameters that run on a grid cluster

- Optimization workflow: to capture a method in which a logical queue running on a cluster node holds the instructions to process jobs in series on a grid cluster

- End-to-end desktop: to capture data from ad hoc analysis where the application's GUI runs on a visualization node and jobs are submitted to and monitored on a cluster

- Rescale REST API: to enable additional tools, linkage, and logic implementation through scripting.
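To make the DOE workflow above more concrete, here is a minimal sketch of how a set of independent jobs with varying input parameters could be enumerated before submission. It is not Rescale's implementation and makes no Rescale API calls: the function `build_doe_jobs`, the job naming scheme, and the design variables `inlet_velocity` and `mesh_size` are all hypothetical, and a full-factorial design is assumed for simplicity.

```python
from itertools import product


def build_doe_jobs(parameters):
    """Expand {parameter name: [values]} into one job spec per combination.

    Assumes a full-factorial design; each job is independent of the others,
    so the jobs could be dispatched in parallel to a grid cluster.
    """
    names = sorted(parameters)  # fixed ordering keeps job numbering stable
    jobs = []
    combinations = product(*(parameters[name] for name in names))
    for index, values in enumerate(combinations, start=1):
        jobs.append({
            "name": f"doe-run-{index:03d}",       # hypothetical naming scheme
            "inputs": dict(zip(names, values)),   # one value per design variable
        })
    return jobs


# Hypothetical design variables for illustration only.
parameters = {
    "inlet_velocity": [10.0, 20.0, 30.0],
    "mesh_size": [0.5, 1.0],
}

doe_jobs = build_doe_jobs(parameters)
for job in doe_jobs:
    print(job["name"], job["inputs"])
```

With three velocities and two mesh sizes, the sketch produces six independent job specifications. In practice, each specification would be handed to whatever submission mechanism the team uses, such as the portal or a script written against the REST API.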

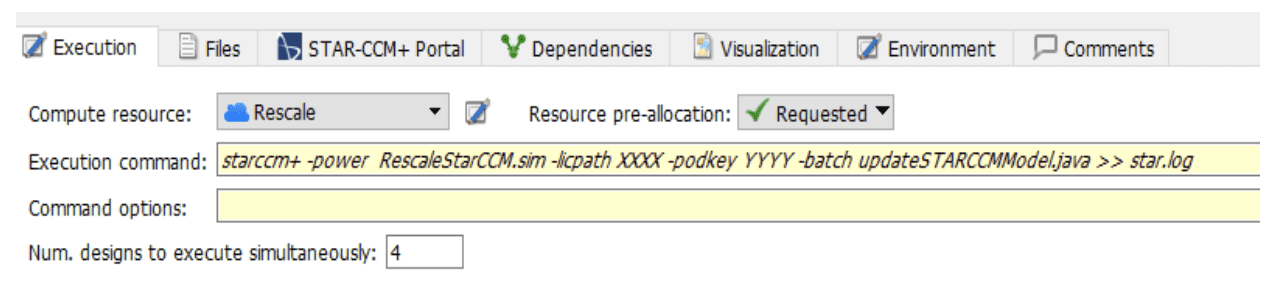

For more complex simulation workflows, Rescale can integrate with the submission framework of Process Integration and Design Optimization (PIDO) applications. Figure 3 illustrates the integration between Rescale and Siemens HEEDS as an example.

Figure 3: Rescale integration with the HEEDS submission framework

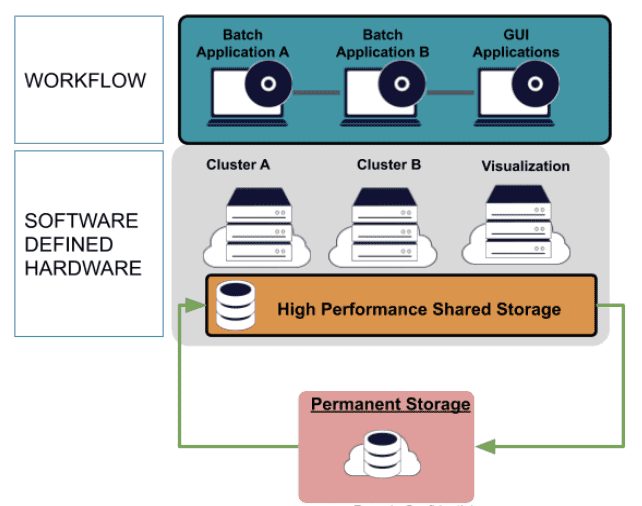

The full workflow can also be executed on high-performance storage so data are shared across multiple jobs, eliminating data movement during computation. The Rescale software-defined hardware approach enables the full-stack to be optimized, including both hardware and software of the entire simulation workflow (as illustrated in figure 4).

Figure 4: Reference architecture of Rescale software-defined hardware approach

This approach raises the level at which complex workflows are defined by aligning HPC workloads to their underlying compute and storage requirements, while considerably reducing the time spent on manual scripting and dependencies. For example, if a cloud service provider releases a new architecture that significantly speeds up the simulation process, the new core type can simply be swapped in. An engineer can also easily add a new step that uses GPUs to train an artificial intelligence model on the data produced by the simulation process. The trained model can then be deployed to designers so they can quickly iterate on the design, or it can be used in an optimization loop within ScaleX.

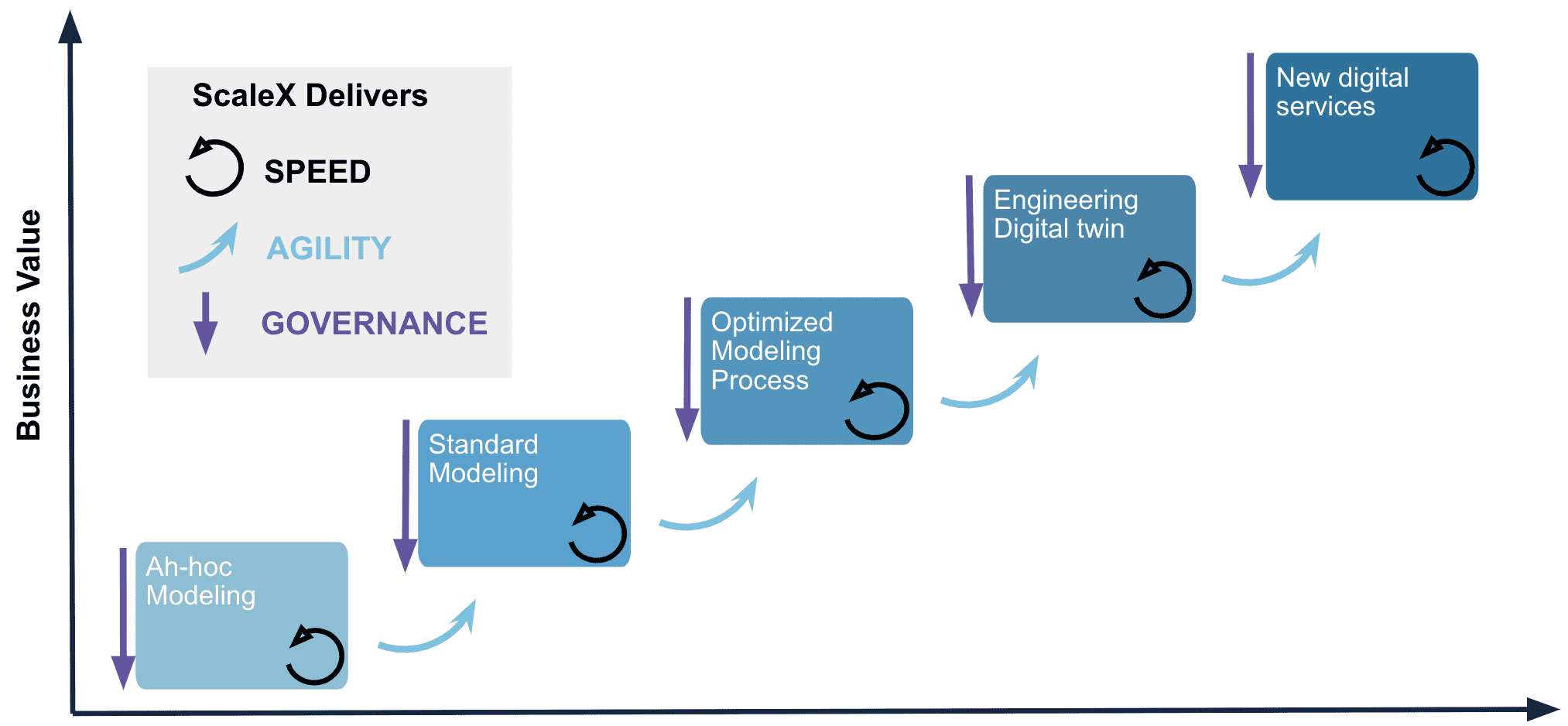

As summarized in figure 5, the ScaleX full-stack approach allows organizations to mature their simulation workflows from ad-hoc to fully automated while ensuring that the right amount of computing is provided along the way. With agile transformations, companies unlock simulation value to the business and rapidly fuel their process with innovation.

Figure 5: The ScaleX platform delivers speed, agility, and governance to advance simulation workflows.

2. Automatic HPC Data Capture for End-to-end Simulation Traceability

The complexity of managing data generated by HPC simulations continues to grow. The cloud offers new approaches to managing these data efficiently and surfacing key issues to different stakeholders more quickly.

CLOUD CONTROLS

As a workflow is defined on ScaleX, data are automatically captured and encrypted in a permanent storage location and are visible only to the user unless specifically shared with others. At execution time, data are automatically moved to the cluster block storage, and once the workflow is completed, result data are moved back to the permanent storage. Rescale also automates operational continuity to help recover from accidental deletion and site failures.
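The data movement described above (permanent storage to cluster storage at execution time, then results back to permanent storage) can be illustrated with a small local sketch. This is only an analogy under stated assumptions: temporary directories stand in for the permanent store and the cluster block storage, encryption and access control are left out, and `run_with_staging` and `toy_solver` are hypothetical names rather than anything provided by ScaleX.

```python
import shutil
import tempfile
from pathlib import Path


def run_with_staging(permanent: Path, inputs: list[str], solver) -> list[str]:
    """Stage inputs to scratch storage, run the solver, and save new files back.

    Handles flat files only; the scratch directory is discarded afterwards,
    mimicking short-lived cluster storage.
    """
    with tempfile.TemporaryDirectory() as scratch_dir:
        scratch = Path(scratch_dir)
        # 1. Move input data next to the compute (copy, so the originals stay put).
        for name in inputs:
            shutil.copy2(permanent / name, scratch / name)
        # 2. Execute the workload against the staged copy.
        solver(scratch)
        # 3. Anything the solver created counts as result data.
        results = sorted(p.name for p in scratch.iterdir() if p.name not in inputs)
        # 4. Persist the results before the scratch space disappears.
        for name in results:
            shutil.copy2(scratch / name, permanent / name)
    return results


def toy_solver(workdir: Path) -> None:
    """Stand-in for a simulation code: reads one input, writes one output."""
    text = (workdir / "model.inp").read_text()
    (workdir / "result.out").write_text(f"solved: {text}")


# Demo with a throwaway "permanent" store.
with tempfile.TemporaryDirectory() as store_dir:
    store = Path(store_dir)
    (store / "model.inp").write_text("beam")
    saved = run_with_staging(store, ["model.inp"], toy_solver)
    result_text = (store / "result.out").read_text()

print(saved, result_text)
```

The point of the sketch is that the engineer calls a single function and never copies files by hand, which is the behavior the platform automates at scale.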

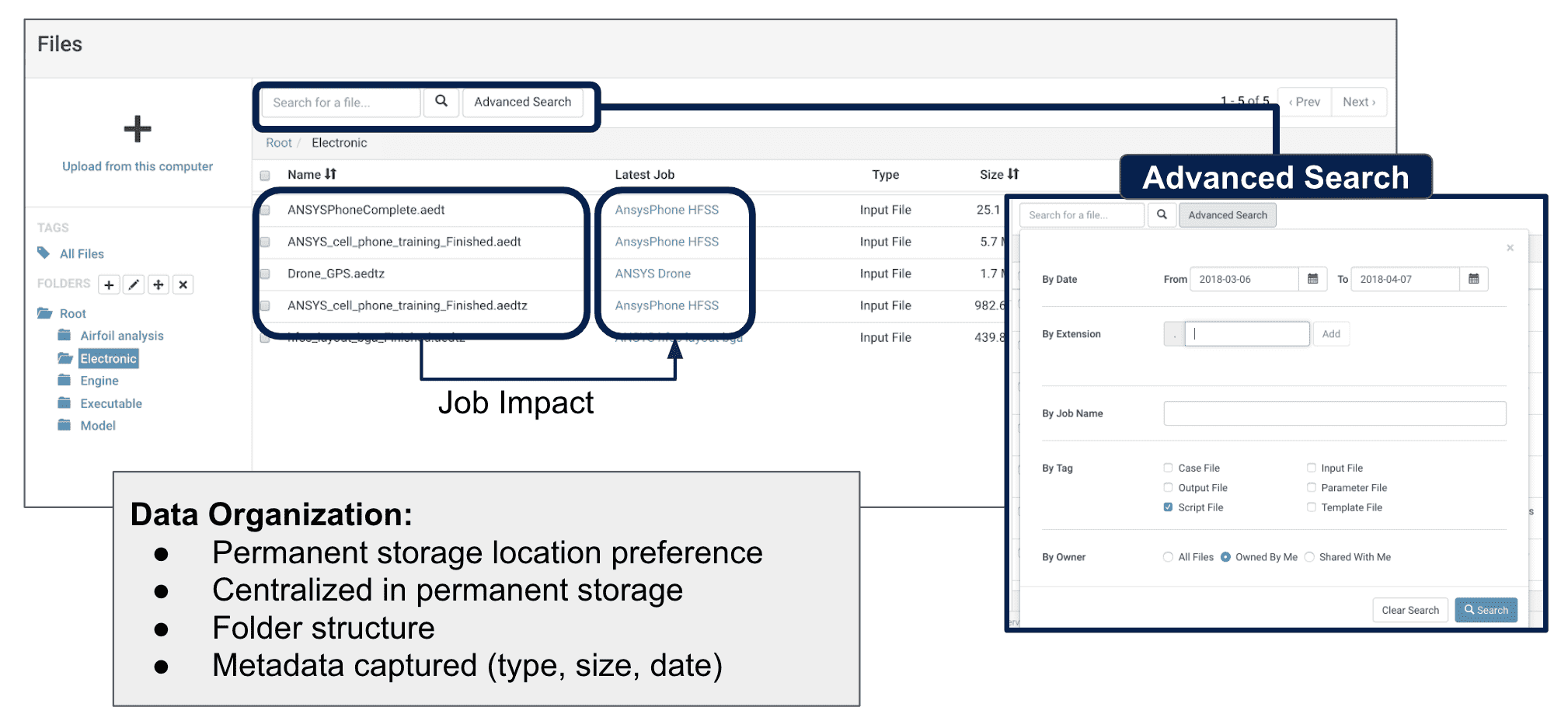

Engineers today spend on average 15% of their time looking for data. In the ScaleX platform, previously defined jobs and past data are searchable (see figure 6) and shareable. A user could, for instance, quickly retrieve a past job with similar attributes, eliminating analysis rework. This also allows tribal knowledge to be captured and easily shared across the enterprise.

Figure 6: Data are natively referenced and searchable in ScaleX
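As a rough illustration of retrieving a past job with similar attributes, the sketch below filters an in-memory catalog of job records by software and tags. The `JobRecord` fields, the `find_jobs` helper, and the sample entries are hypothetical and do not reflect the ScaleX data model or its search interface.

```python
from dataclasses import dataclass, field


@dataclass
class JobRecord:
    """Hypothetical metadata captured for each completed job."""
    job_id: str
    name: str
    software: str
    tags: set = field(default_factory=set)


def find_jobs(records, software=None, required_tags=()):
    """Return records that match the software (if given) and carry every required tag."""
    required = set(required_tags)
    return [
        record for record in records
        if (software is None or record.software == software)
        and required <= record.tags  # subset test: all required tags are present
    ]


# Hypothetical catalog entries for illustration only.
catalog = [
    JobRecord("job-001", "wing-cfd-baseline", "solver-a", {"cfd", "wing"}),
    JobRecord("job-002", "wing-cfd-flaps", "solver-a", {"cfd", "wing", "flaps"}),
    JobRecord("job-003", "bracket-fea", "solver-b", {"fea"}),
]

matches = find_jobs(catalog, software="solver-a", required_tags=["wing"])
print([match.job_id for match in matches])
```

An engineer starting a new wing analysis could use a query like this to find earlier runs and reuse their setup instead of rebuilding it.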

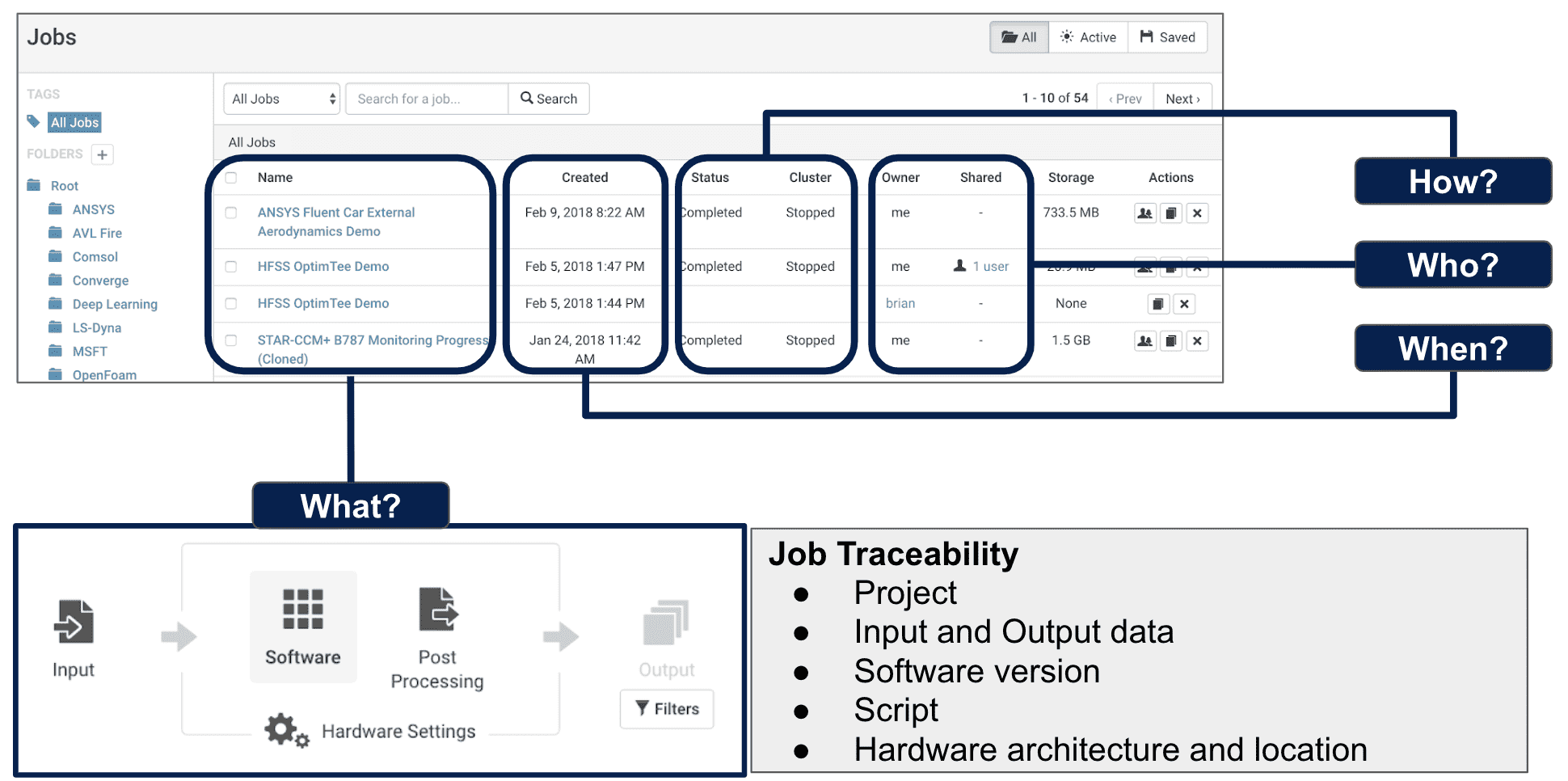

Because all information is stored in easily searchable cloud data stores, it becomes more traceable by users or project teams in the ScaleX user portal through the job dashboard and job object (Figure 7). Rescale job parameters such as the job ID can also be exposed via API to a Simulation Lifecycle Management (SLM) or PLM solution to maintain continuity with other simulation attributes (e.g. material or mesh part database) and design attributes (e.g. geometry version or product requirements).

Figure 7: Job dashboard and job workflow provide full traceability

In addition, the full-stack approach captures HPC information about the application flow, so this data is always managed in full context.

The Rescale platform structures, profiles, and takes full advantage of data assets from HPC usage, and does this completely transparently to the users.

3. Globally optimized architecture for increased collaboration

Today’s organizations have engineering centers and customers distributed across the world. The complexity of the supply chain is increasing. A key component of a modern SPDM strategy is to facilitate teamwork across different locations. That means the HPC environment has to bring compute resources close to each user and overcome the challenges of simulation data fragmentation and data gravity globally.

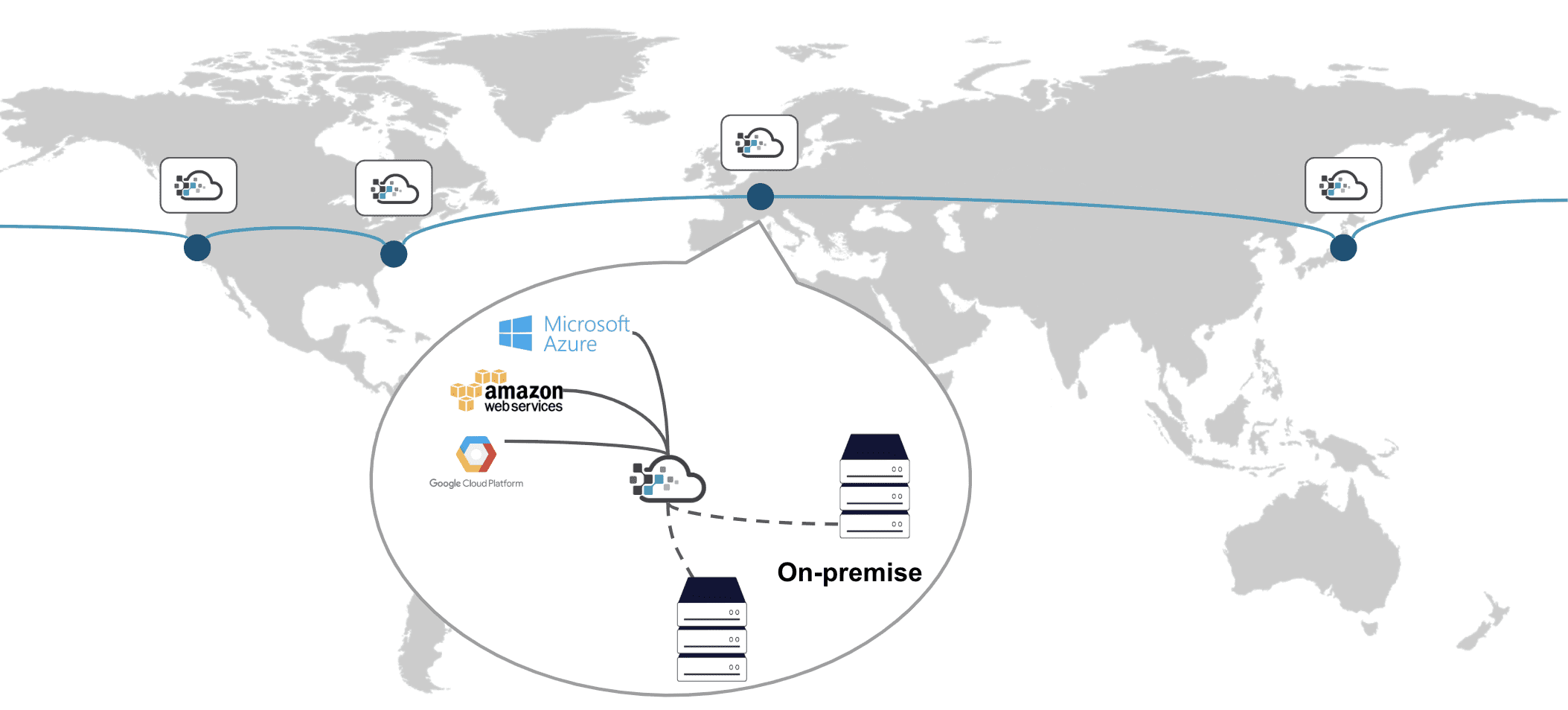

Rescale’s environment allows users to share models with colleagues or external collaborators at the push of a button while assuring the administrator that no IP leaks. With data centers across the world (see figure 8) and robust monitoring and visualization capabilities, the platform is built to make data globally accessible (geo-distribution) while minimizing data movement and maintaining traceability.

Figure 8: Global Rescale presence

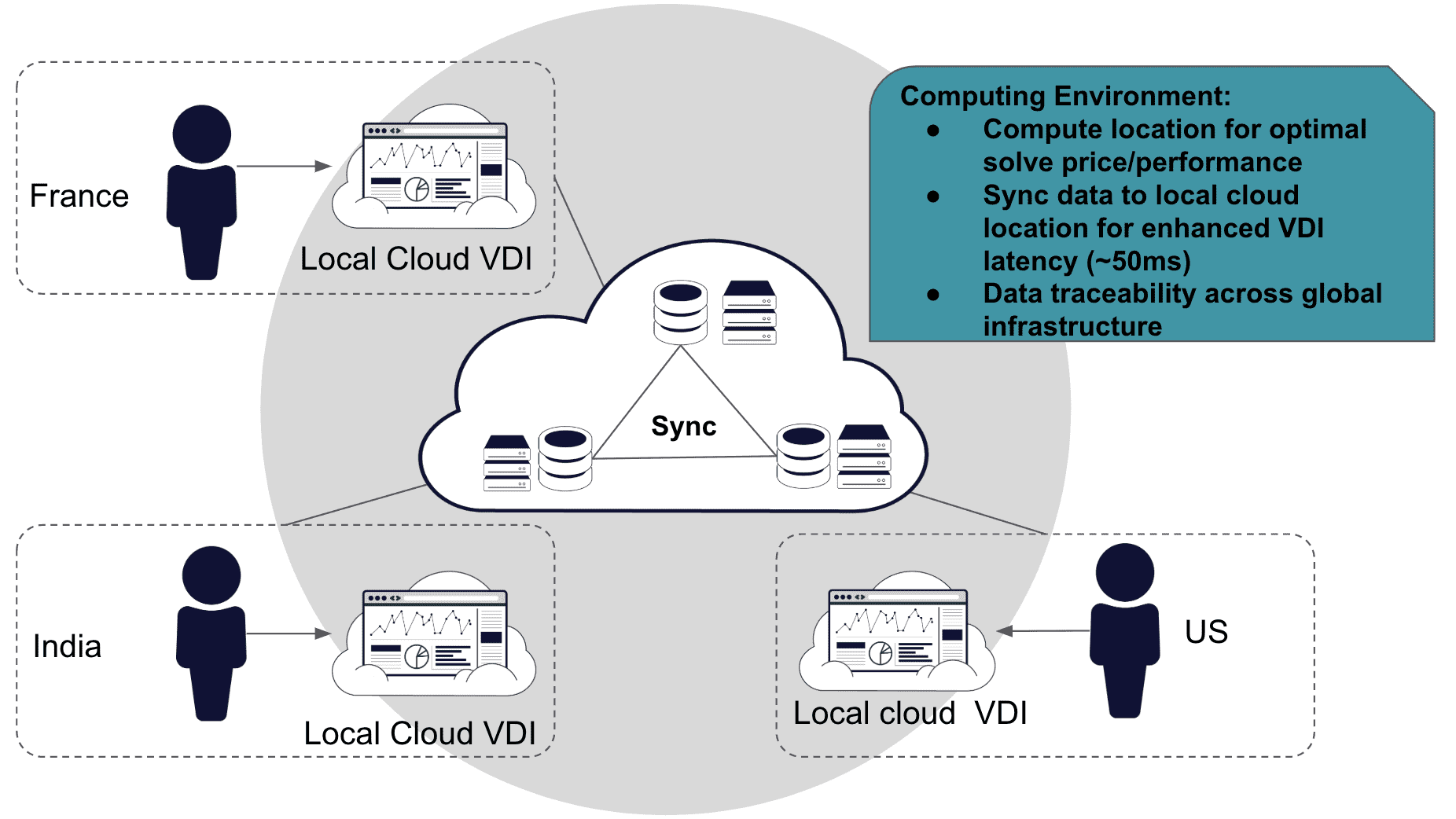

ScaleX Desktop minimizes the need to transfer data between the cloud and the desktop computer by providing a powerful, visual experience with simultaneous access to all pre- and post-processing tools. It is an in-browser remote visualization capability that seamlessly integrates with the ScaleX platform and provides interoperability with corporate networks. It can be configured with GPU acceleration and high-memory nodes to improve the on-screen experience for users. A ScaleX user can also submit a batch job anywhere in the world and automate the transfer of the output data to the data center closest to their collaborators to reduce latency for visualization (as shown in figure 9). This quick access to the model and results via the web also makes design review meetings and engineering discussions much more interactive.

Figure 9: Global architecture for low-latency remote visualization

With the ScaleX optimized global architecture, companies can now define HPC methods to interact with collaborators and offer innovative new HPC-based services to their customers. This architecture also helps realize a corporate vision built around model-based systems engineering or digital twin architectures.

4. Systematic Governance and Security

The cloud aligns business and HPC users under a unified governance model with full visibility and control. Once HPC resources are no longer isolated and are integrated with transparent cloud controls, they can be aligned with a broader set of IT policies to maintain standardized control across geographies, business units, project teams, and individuals, and to enforce best practices systematically.

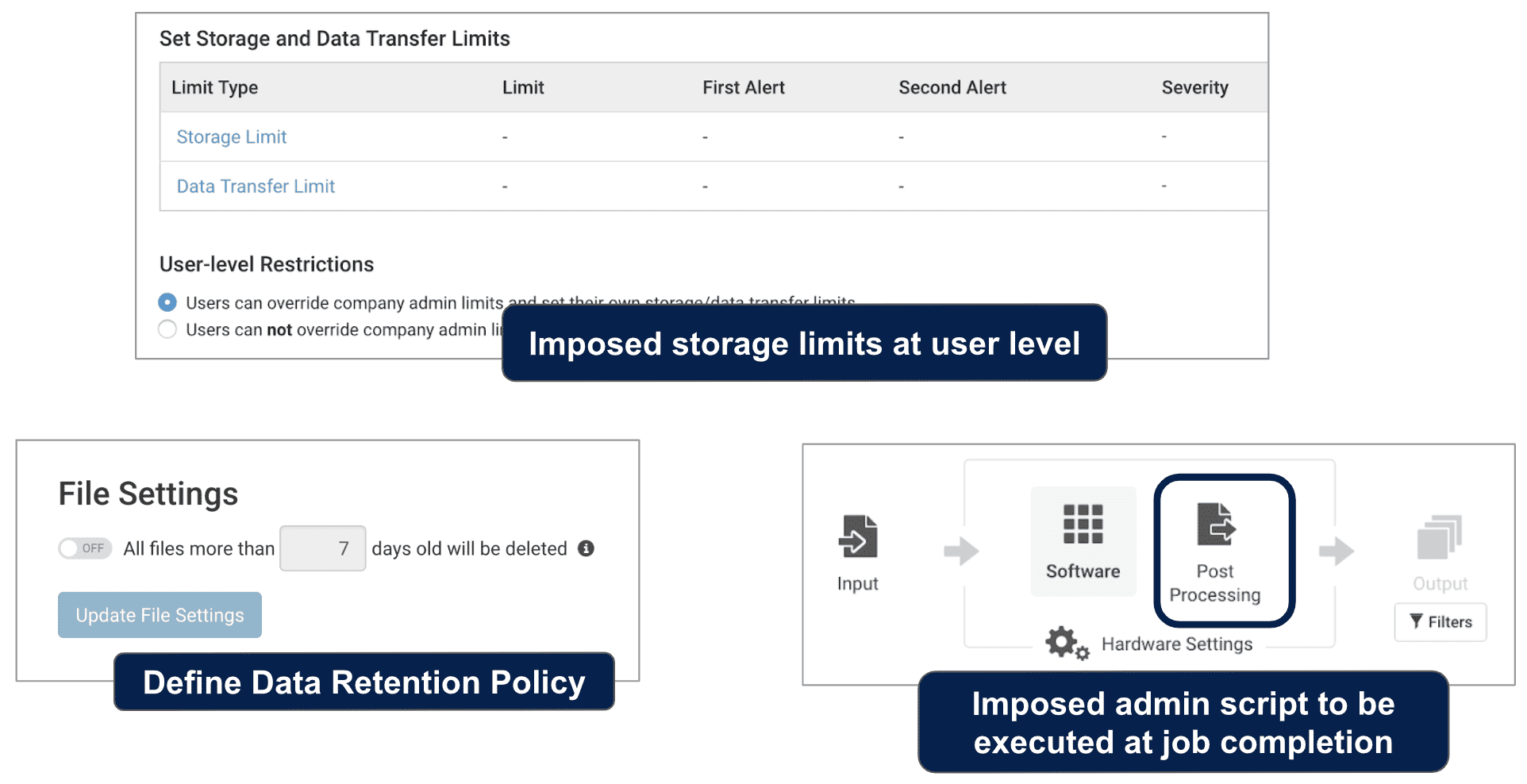

Using the ScaleX administrator portal, data governance policies and procedures (see figure 10) are defined and applied automatically and at different levels of the organization. As a result, the quality of the data retained by the company is easily maintained globally.

Figure 10: Examples of features available in the admin portal to define storage policies

When onboarded into ScaleX, users are placed in a collaborative workspace with controlled access via multi-factor authentication and IP restriction rules. Data and projects are managed according to their level of sensitivity and in relation to each business unit’s governance directives. ScaleX users and workspaces can also be mapped to their identities in Microsoft Active Directory. Through the use of the workspace, ScaleX establishes a federated environment for the extended organization and governs collaboration while protecting simulation data IP.

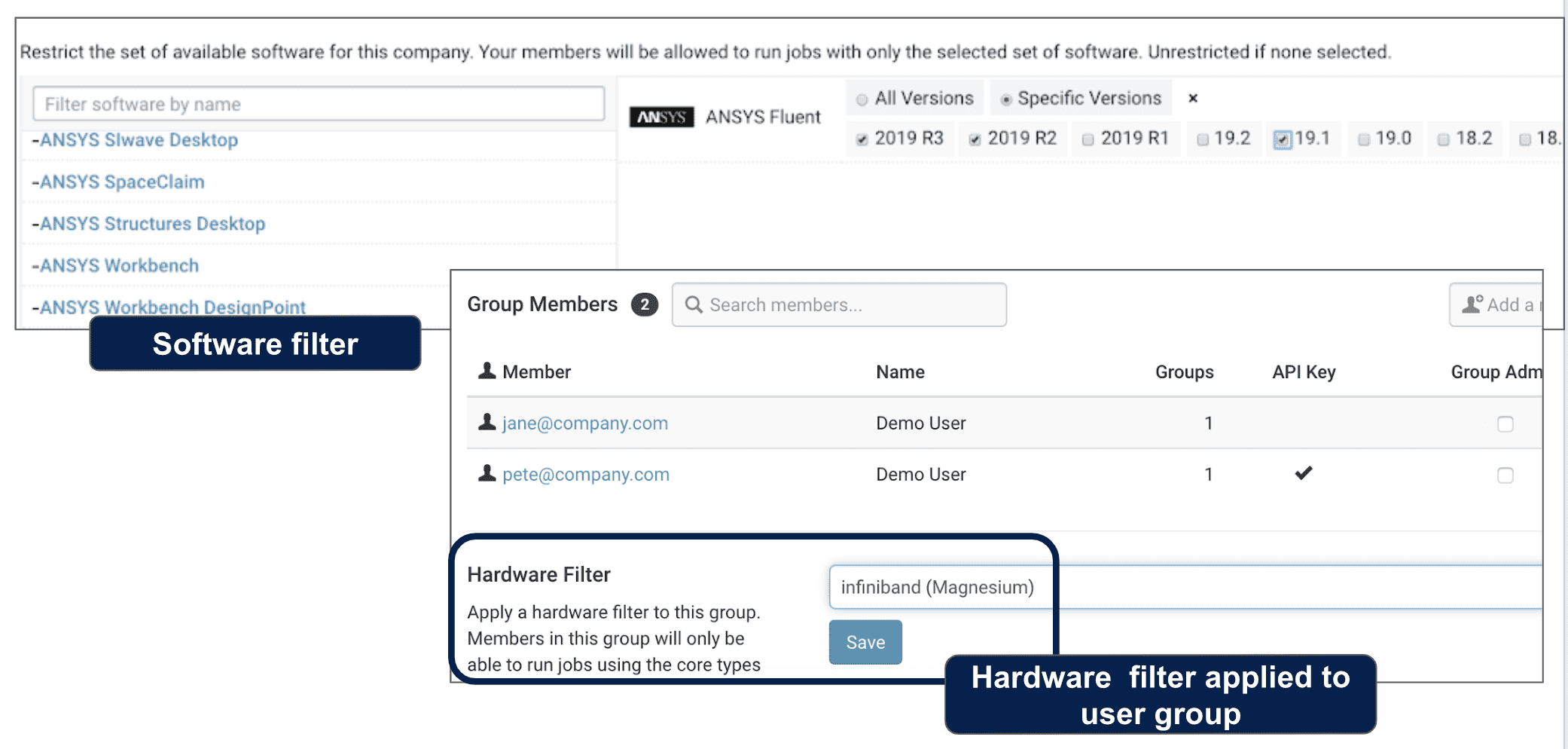

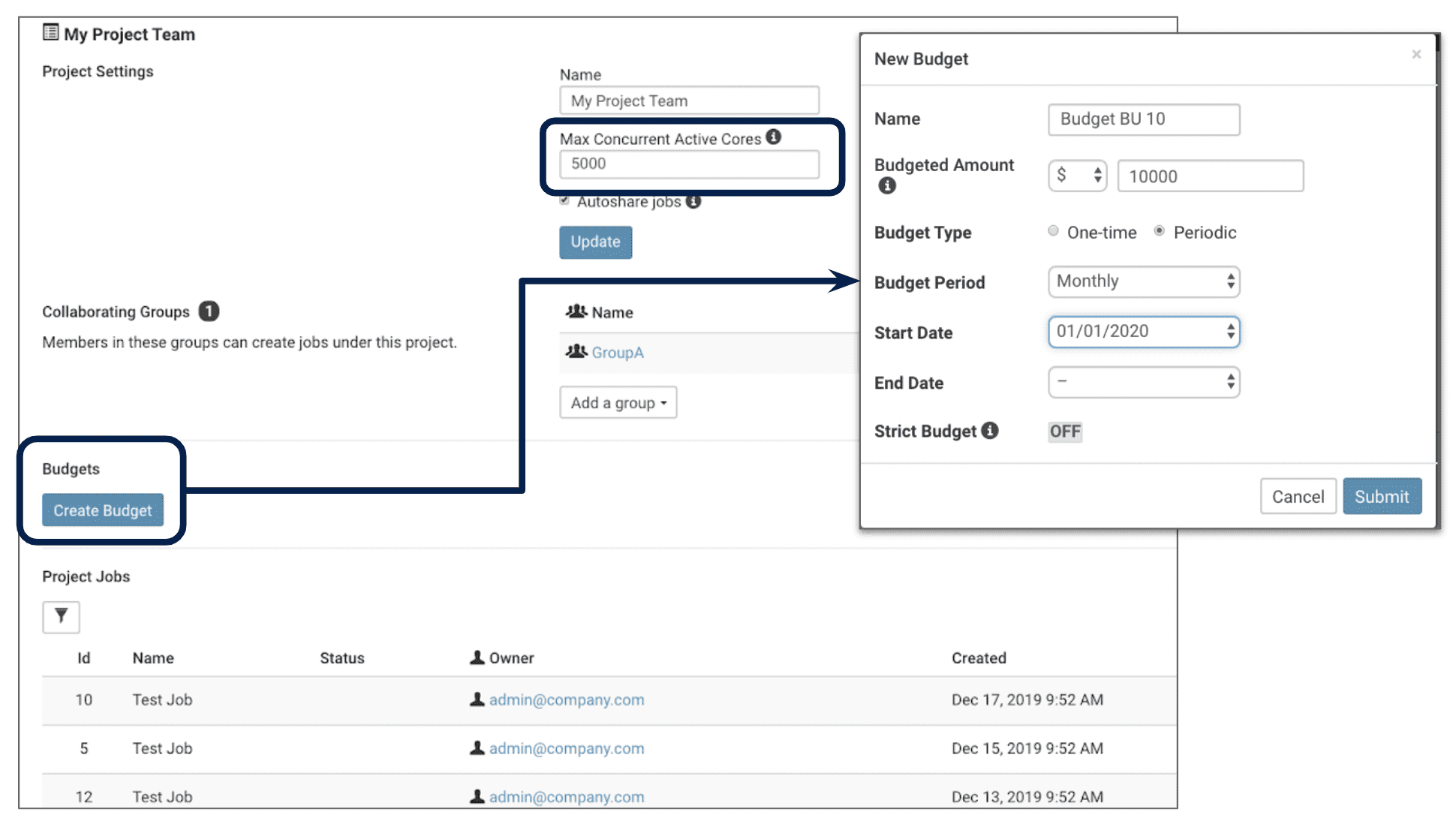

Through the administrative portal, IT also defines visibility across the enterprise over cloud resources and applications, as illustrated in figure 11. Administrators can now standardize the full-stack workflow that users execute. IT can grant a user, a project team, or the full workspace access to the cloud, or to a specific software version as needed, at the click of a button. IT can further control spending by limiting the number of concurrent cores a project team can access at a given time and by assigning budgets (Figure 12). Budgets work by monitoring all types of costs (i.e. hardware, software, data transfer, storage, license proxy) and ensuring that the desired limits are not exceeded.

Figure 11: Software and hardware filters govern the deployment of the full-stack simulation method across the enterprise

Figure 12: Compute spending is controlled through budgets and limits on the number of cores a team can access.
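The budget and concurrent-core controls described above amount to an admission check before each job starts. The sketch below shows one way such a check could work; it is an assumption-laden illustration, not the ScaleX implementation. The `TeamPolicy` class, its fields, and the sample figures are hypothetical, and only the cost categories come from the text.

```python
from dataclasses import dataclass, field

# Cost categories named in the text; how they are metered is not modeled here.
COST_TYPES = ("hardware", "software", "data_transfer", "storage", "license_proxy")


@dataclass
class TeamPolicy:
    """Hypothetical per-team limits set by an administrator."""
    budget: float
    max_concurrent_cores: int
    spent: dict = field(default_factory=lambda: {c: 0.0 for c in COST_TYPES})
    cores_in_use: int = 0

    def total_spent(self) -> float:
        """Sum spending across every cost category."""
        return sum(self.spent.values())

    def can_start(self, cores: int, estimated_cost: float):
        """Return (allowed, reason) for a job that requests the given cores and cost."""
        if self.cores_in_use + cores > self.max_concurrent_cores:
            return False, "core limit exceeded"
        if self.total_spent() + estimated_cost > self.budget:
            return False, "budget exceeded"
        return True, "ok"


# Hypothetical figures for illustration only.
policy = TeamPolicy(budget=1000.0, max_concurrent_cores=256)
policy.spent["hardware"] = 700.0
policy.spent["storage"] = 50.0
policy.cores_in_use = 128

print(policy.can_start(cores=64, estimated_cost=100.0))
print(policy.can_start(cores=256, estimated_cost=10.0))
print(policy.can_start(cores=64, estimated_cost=300.0))
```

Checking the core limit and the budget at submission time means a job that would breach a limit is rejected up front instead of being stopped partway through.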

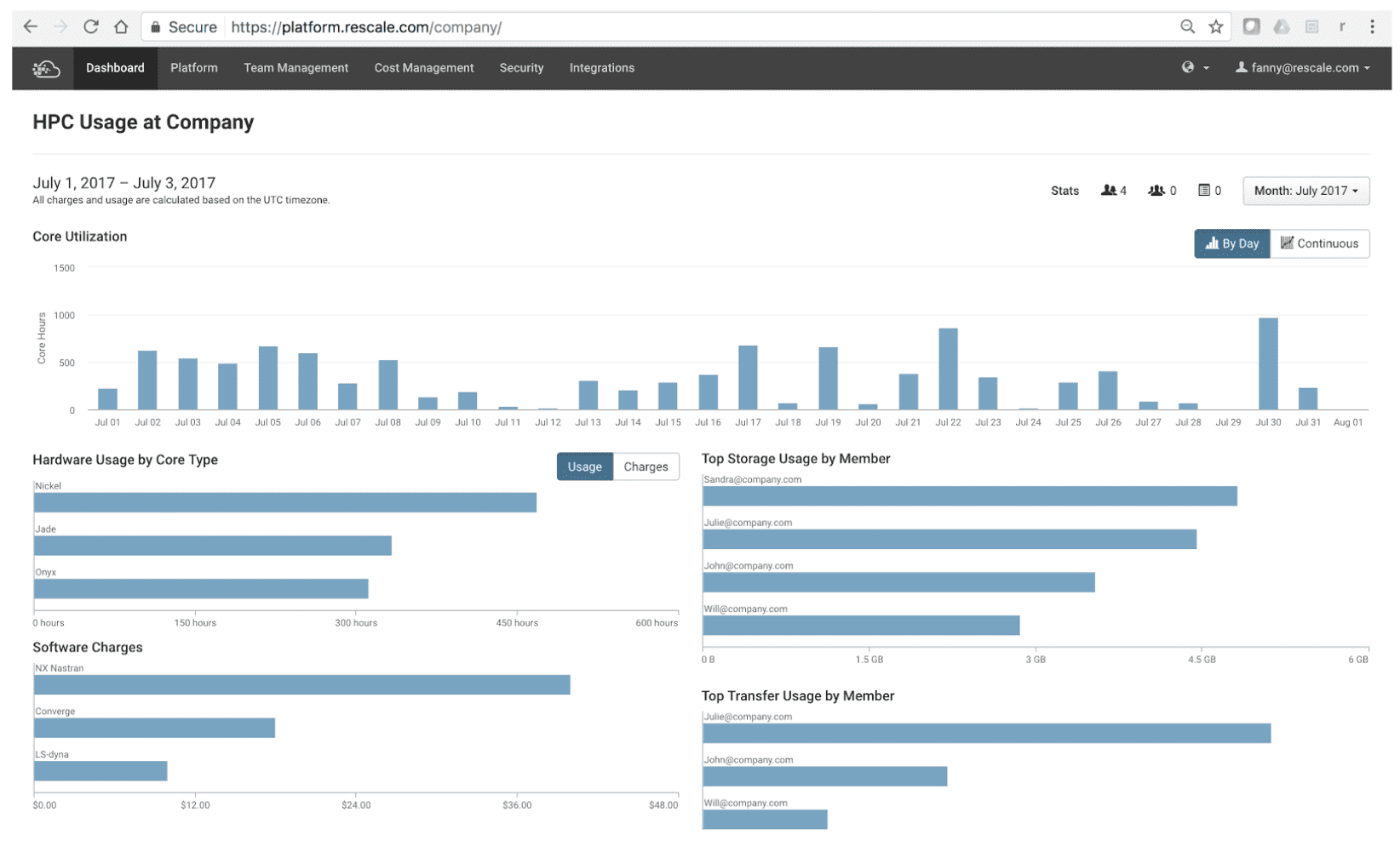

With access to data from HPC workloads in real-time, IT administrators and engineering leaders can make more informed decisions and continuously take action that drives efficiencies. The usage dashboarding (Figure 13) surfaces hardware and storage usage. For instance, an HPC admin can quickly identify which users or simulation workflows could benefit from a newly available core type, or stay ahead of any storage trend.

Figure 13: HPC usage dashboard

To conclude, we have seen the importance of building an SPDM solution on the foundations of an open technology stack, data automation, and governance. However, to be a leader and successfully deploy an SPDM solution, companies must first and foremost invest in their simulation methods and ensure they are an integral part of their business operations.