What Is Cloud High Performance Computing?

Contents

What Is Cloud HPC?

Common HPC Use Cases for R&D and Engineering

What Are the Different Options for Deploying Cloud HPC?

High Performance Computing vs. Supercomputing

Want to Learn More About Cloud HPC?

Cloud HPC

Cloud HPC (High Performance Computing)

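High performance computing (HPC) is one of the most influential technologies that scientists, researchers, and engineers use to develop the most advanced and urgently needed modern innovations. The term HPC is often used to describe the hardware and software tools used to digitally model or simulate new products and how they will perform in the real world. Since the mid-20th century, the invention of powerful computers, combined with new engineering techniques, has transformed how advanced technologies, everyday products, and even urban infrastructure are designed, optimized, and manufactured.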

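For decades, HPC was used mostly by large organizations with the substantial funding and large IT teams needed to support complex supercomputers and extensive hardware and software stacks. Cloud computing infrastructure lets any company access a virtually unlimited combination of specialized hardware from anywhere through an on-demand subscription model, significantly lowering the barrier to entry for these transformative technologies.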

HPC Moves to the Cloud

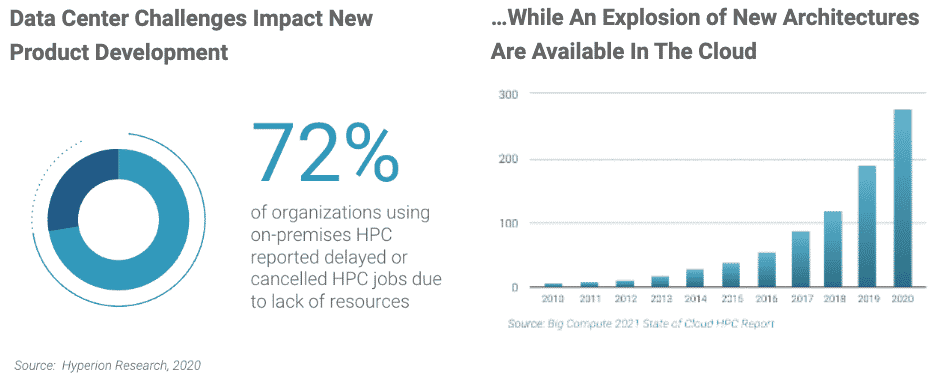

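Cloud HPC has changed how companies provision resources for R&D initiatives, thanks to the agility and flexibility it brings to deployment and management. Compared with on-premises supercomputers, HPC clusters, and workstations, cloud HPC can be procured and deployed faster and easily adjusted to an organization's needs at any given moment. With cloud HPC, launching strategic, time-sensitive projects no longer requires costly upfront investments of capital and time. Today, about 70% of surveyed organizations have incorporated the cloud into their HPC work, and more than 50% report consistent usage (Source: 2022 State of Computational Engineering Report).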

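As global cloud infrastructure has matured in scale and capability, HPC practitioners, IT managers, and business leaders alike have discovered further benefits of adopting cloud HPC. From better collaboration and user experience to improved reporting and management, cloud HPC can raise end-user productivity, reduce operational risk, and ultimately shorten time to market. Organizations' HPC hardware and software portfolios are becoming increasingly specialized and diverse, leading many to adopt multi-cloud strategies that continuously optimize application workloads for higher performance and lower cost. With demand for computer-aided engineering rising and new AI/ML technologies proliferating, technology leaders are turning to cloud HPC to reduce operational risk and secure the hardware they need to succeed on their most important projects.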

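Business leaders weighing strategic investments in computing should consider cloud HPC as a way to optimize overall operational efficiency. Much as the cloud has transformed many other aspects of enterprise technology, cloud HPC enables instant access, monitoring, governance, and forecasting from a single pane of glass in a browser, anywhere in the world. This agility and flexibility is a powerful competitive advantage that enables new discoveries when organizations adopt a cloud-first HPC strategy.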

Common HPC Use Cases for R&D and Engineering

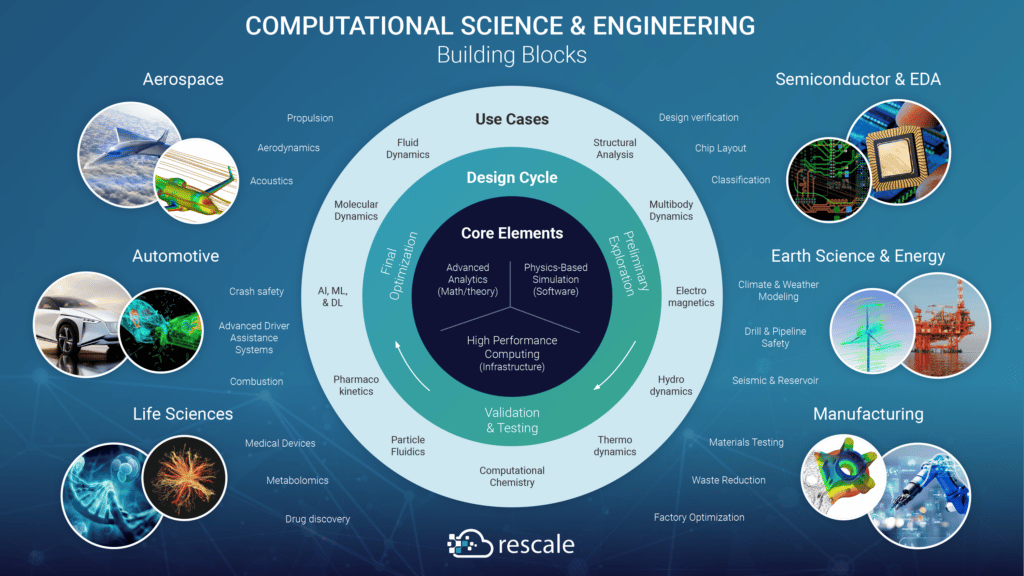

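High performance computing gives engineers, scientists, and researchers new ways to solve real-world problems digitally, removing the need to build expensive prototypes for physical testing. The use of compute-intensive analysis and simulation, also known as computational science and engineering or computer-aided engineering, has spread to nearly every industry involved in making physical goods, including aerospace and defense, automotive, earth sciences, energy, life sciences, and manufacturing. As practitioners develop new applications that lead to new discoveries at a faster pace, computational science and engineering will likely keep expanding into new use cases. HPC is often associated with the following use cases in the industries mentioned above.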

Aerospace Industry

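Designing and building new aircraft for transportation or space exploration requires using HPC to optimize nearly every component. From maximizing lift and thrust to minimizing drag and weight, modern R&D efforts focus on making air travel safer and more efficient so that more people can use it. HPC is commonly used to digitally test everything from aerodynamics, structural stiffness, and weight to launch trajectories (i.e., ballistics), making mission success more likely than ever. Current trends in aerospace R&D include vertical takeoff and landing, urban air mobility, electrification, supersonic and hypersonic flight, low-boom designs, and autonomous systems. As HPC for digital simulation has become more accessible, the commercialization of space travel and space-based industries has become more efficient than ever. With higher speeds and sustainability as priorities, aerospace HPC will undoubtedly play an important role in developing new vehicle propellants and materials. Launching satellites and other space infrastructure has emerged as a major focus of R&D aimed at making missions to low Earth orbit (LEO), the Moon, and Mars feasible, economical, and safe.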

Automotive Industry

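Few consumer products receive R&D and engineering as finely tuned as today's cars, trucks, and other wheeled vehicles. With billions of vehicles on the road worldwide, optimizing vehicle performance and efficiency is critical to staying competitive in today's market. HPC is widespread among automakers and suppliers racing to build better drivetrains, chassis, safety systems, and new electrification and autonomous systems that make driving more comfortable, safer, and more environmentally friendly. A common example of automotive HPC is using computational fluid dynamics (CFD) to improve engine combustion; HPC is also used for aesthetics and comfort, such as optimizing body and wheel curvature for visual appeal and aerodynamics. The acoustic effects of different materials, as well as injection molding and casting methods, are also tested digitally before mass production begins. As vehicles become more autonomous and connected, engineers are simulating the many sensors, antennas, and semiconductors needed to navigate complex environments safely. Battery chemistry can be tested alongside thermodynamic properties to prevent overheating and avoid potential recalls caused by defects. As more new entrants begin testing production vehicles, HPC is increasingly used for finite element analysis (FEA), or finite element modeling (FEM), to analyze structures in virtual crash tests. This reduces the need to buy or rent expensive equipment to crash fully functional vehicles, ultimately saving both money and lives.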

Energy Industry

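Global energy demand has grown steadily over the past 100 years, and as more countries modernize rapidly, new innovations in generation, storage, and distribution will be needed. Computing has broad implications in the energy sector, with goals ranging from improving the methods used to find and extract fossil fuels to making power grids more efficient. Before modern computing was applied to geological surveying, oilfield discovery was invasive; modern 3D seismic modeling techniques have minimal impact and are far more accurate. Because most of the world's energy production and consumption comes from fossil fuels (including coal, oil, natural gas, and other gases), engineers have worked hard to minimize environmental externalities and maximize the output of these systems, including onshore and offshore oil extraction. The pressing need to develop new clean energy sources makes HPC a key tool for developing wind, wave, solar, and small-scale nuclear fission or fusion. As the world shifts to new sustainable and renewable sources, these energy systems must be optimized for variability and energy-storage duration. Many common weather models used by meteorologists and scientists are now open source and help energy companies (and many other industries) better predict and respond to weather variability. Whether engineers need to simulate physical or chemical energy reactions, a wide range of software is available for virtually building and testing new solutions for a cleaner tomorrow.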

Life Sciences Industry

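One of the most notable examples of accelerated innovation is genomics research, through which HPC's impact on products such as pharmaceuticals, medical devices, and personalized medicine can be seen. The life sciences and their many related R&D fields have generated enormous amounts of data, opening new possibilities for therapies, vaccines, and drugs that could dramatically improve patient outcomes. Scientists and researchers rely on HPC in the life sciences to process more data than ever and to better understand the human body, down to each individual's unique biology. On-demand access to HPC in the cloud makes it easy for organizations and research studies to run simulations and models at the cellular and molecular level to predict how diseases and treatments will affect the human body. Common analyses that benefit from HPC include genome sequencing, molecular dynamics, pharmacokinetics, fluid dynamics, crystallization, protein folding, and computational and quantum chemistry. With growing access to flexible cloud resources, leading pharmaceutical companies can shorten trial times, accelerating both drug discovery and time to market. Healthcare and pharmaceutical companies can also use digital simulation to reduce the number of failed clinical trials and ineffective drug formulations, improving both the efficiency and the effectiveness of R&D. As many hospitals pursue digital transformation initiatives, digital record keeping (bioinformatics) and computational research will depend more and more on flexible, secure resources that can meet growing scale. By using specialized, modern cloud capabilities, physicians and researchers can keep discovering life-saving solutions that were previously out of reach.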

Manufacturing Industry

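Industry 4.0 has brought major changes to how modern product companies and their suppliers bring new products to market. From smart factories to connected devices, digitalization at every level of the product lifecycle gives designers and engineers a digital thread for tracking critical product characteristics. This digital thread often begins long before the first prototype is built, because HPC lets teams build and test digital prototypes down to the smallest component. As a result, when production starts, companies can be confident that the product will perform as expected. This approach appears in consumer packaged goods (CPG), heavy industry, electronics, and almost any product that requires mass production or is expensive to manufacture. Engineers often run simulations using multiphysics, the discrete element method (DEM), computational fluid dynamics (CFD), and the finite element method. These specialized analyses give a better understanding of a product's structural integrity, thermal management, and performance (or failure), and help predict how manufacturing processes can be automated. As new manufacturing technologies such as additive manufacturing (3D printing), digital twins, and the Internet of Things (IoT) come online, manufacturers increasingly use HPC to predict where to invest to secure product quality, safety, and factory efficiency. Notably, manufacturing companies stand to gain unique benefits from cloud HPC, because their computing requirements vary widely and their large teams and projects need flexible access to specific hardware and software. Beyond building better products, raising customer satisfaction, and reducing warranty risk, companies are also reviewing their processes to find ways to make them more sustainable, safe, and efficient.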

What Are the Different Options for Deploying Cloud HPC?

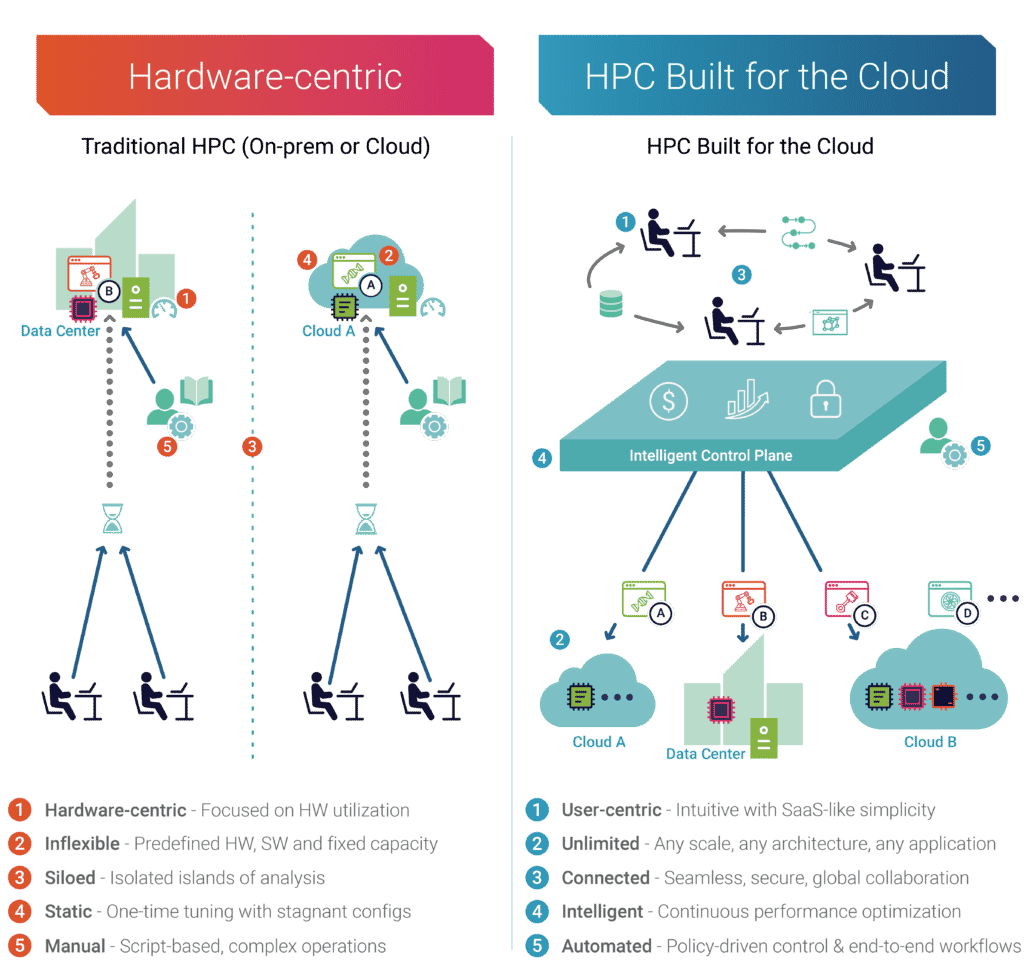

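When choosing a path to cloud HPC, IT and business-unit decision makers need to understand their motivations and desired outcomes, along with the possible deployment models. Common motivations for moving to the cloud include flexibility, choice, and simplification, yet many of the services on offer do not realize the cloud's full potential. The following four models outline different ways to get started in the cloud. But buying committees, beware: these models are not all created equal.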

Option 1 – Do-It-Yourself "Lift and Shift" Cloud HPC Approach:

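Organizations used to investing in on-premises HPC "clusters" may approach their cloud strategy the same way. This involves buying a fixed allocation of static compute resources, except that the organization now uses public cloud infrastructure instead of physical hardware ("lift and shift"). The amount of capacity purchased is based on an upfront estimate of the compute needed over a long period. DIY lift-and-shift customers simply aim to rebuild and self-manage the same technology stack they ran on premises, which brings long lead times and added complexity when building in the new environment.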

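Cloud HPC decision makers and administrators typically bring legacy metrics with them, such as optimizing for high utilization, which recreates the scarcity of on-premises systems where end users had to wait in long queues to run their workloads. To control costs, organizations often pursue long-term commitments with individual cloud service providers (CSPs) without considering the potential benefits of optimizing cost-performance across multiple CSPs. With the explosion of specialized hardware options in recent years, multi-cloud HPC customers have been able to realize 30% more value quarter over quarter by continuously finding more efficient hardware-software combinations.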
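To make "optimizing cost-performance across multiple CSPs" more concrete, here is a minimal Python sketch, assuming each candidate configuration has a known per-node-hour price and a benchmarked runtime for the same job. The provider names, instance labels, prices, and runtimes are hypothetical placeholders, not real CSP offerings or measured data, and the cost model (price per node-hour × nodes × runtime hours) deliberately ignores storage, data transfer, and license fees.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class RunOption:
    """One candidate cloud/hardware configuration for a single simulation job."""
    provider: str               # hypothetical cloud provider label
    instance: str               # hypothetical instance-type label
    price_per_node_hour: float  # illustrative USD price, not a real quote
    nodes: int                  # number of nodes the job uses
    runtime_hours: float        # benchmarked wall-clock time on this configuration

    @property
    def cost(self) -> float:
        # Simplified compute cost: ignores storage, egress, and license fees.
        return self.price_per_node_hour * self.nodes * self.runtime_hours


def pick_best(options: Sequence[RunOption], max_hours: Optional[float] = None) -> RunOption:
    """Return the cheapest option, optionally limited to those finishing within max_hours."""
    eligible = [o for o in options if max_hours is None or o.runtime_hours <= max_hours]
    if not eligible:
        raise ValueError("no option finishes within the deadline")
    return min(eligible, key=lambda o: o.cost)


# Hypothetical benchmark results for the same job on three configurations.
options = [
    RunOption("cloud-a", "cpu-general", 1.5, 8, 10.0),
    RunOption("cloud-b", "cpu-hpc", 3.0, 8, 6.0),
    RunOption("cloud-c", "gpu", 12.0, 2, 5.5),
]

if __name__ == "__main__":
    cheapest = pick_best(options)
    print(cheapest.provider, cheapest.cost)
    with_deadline = pick_best(options, max_hours=6)
    print(with_deadline.provider, with_deadline.cost)
```

With these made-up numbers, the slowest configuration is cheapest overall, but a 6-hour deadline shifts the choice to a faster one. Re-running a comparison like this as new hardware appears is the kind of trade-off a single long-term commitment makes harder to revisit.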

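Other challenges DIY customers face include the ongoing maintenance of a diverse software portfolio and the need to build additional reporting and control layers that cloud providers do not offer natively. Enterprise teams that need performance analytics and security reporting will likely have to build these components from scratch to meet best practices. Weighing the downsides of this complexity, without many of the cloud's advantages, the DIY lift-and-shift model can be more work than it is worth for most organizations.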

Option 2 – ISV Cloud HPC Approach:

ISV Cloud HPC Pros

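Scientists, researchers, and engineers have long relied on compute-intensive simulation and modeling software. To make this software more accessible, several major independent software vendors (ISVs) have partnered with infrastructure providers to offer packages that bundle both the software licenses and the compute needed to run workloads on demand. These subscription models give software users simplicity through pricing flexibility.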

ISV Cloud HPC Cons

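The drawbacks of ISV cloud offerings quickly surface in growing teams and in organizations with mature HPC practices. The main trend making this cloud HPC model unworkable is the rapid growth in the number of software tools users rely on, which produces a diverse mix of commercial (ISV), open-source (free), and custom (proprietary) software. A typical organization with HPC practitioners runs several software applications: 67% of teams run 2 to 5, and 12% run 6 or more (Source: State of Computational Engineering Report).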

In addition, many of these ISV offerings are tied to specific CSP partnerships, giving end users little choice in, or visibility into, the underlying infrastructure and its cost economics.

Option 3 – Managed Cloud HPC Approach:

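This cloud HPC category is a broad bucket of managed cloud offerings that let customers outsource their HPC infrastructure while keeping a reserved or private infrastructure model. These offerings are often built on a bare-metal private cloud architecture that can give customers the impression of always-on, dedicated infrastructure. In reality, these services are usually fixed-capacity contracts that lack the true benefits of cloud flexibility. Some providers offer a single cloud, or sometimes two, but lack the platform intelligence to shift dynamically to the most efficient cloud or compute architecture. In most cases, managed cloud HPC services are designed only for specific applications or use cases and may not be able to scale up or scale out.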

Option 4 – Cloud Automation Platform Approach:

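Deploying a cloud HPC system that can adapt and grow with business needs requires a solution that abstracts away complexity while taking advantage of the best parts of the cloud. That is why Rescale™ believes in delivering a purpose-built solution: HPC built for the cloud. To achieve this, Rescale's diverse teams have assembled an end-to-end solution that gives customers in every industry access to the best HPC technologies available.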

To define HPC built for the cloud, Rescale looks at five key attributes of strategic cloud HPC:

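- User-centric – A solution that delivers resources to users on demand, always with project and business goals in mind. This also means simplifying the user experience to broaden access for more engineers and scientists through flexible job-submission options such as a graphical user interface (GUI), an application programming interface (API), and a command-line interface (CLI).

- Unconstrained – A solution that lets any application run on any architecture (multi-cloud, hybrid/on-premises).

- Broadly connected – A solution that unifies siloed analyses, fosters collaboration, and consolidates best practices.

- Intelligent – A solution that recommends the optimal software-hardware combination and architecture configuration to meet business goals.

- Automated – A solution that eliminates complexity and repetitive administrative tasks while accelerating end-user workflows and minimizing budget- and security-related operational risk.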

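In summary, the built-for-the-cloud approach starts from the goal of optimizing R&D speed and efficiency and of focusing on business needs rather than simply on the infrastructure being used. This approach helps organizations align with the goals of digital transformation: streamlining how teams interact, removing bottlenecks, and driving new innovation.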

To learn more about the HPC built for the cloud approach, download the full eBook today.

High Performance Computing (HPC) vs. Supercomputing: Is There a Difference, and Which Is Best for Business?

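The terms "high performance computing" and "supercomputing" are often used interchangeably. But are they really the same thing? Before getting started, it is important to consider the definitions and approaches.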

Supercomputing

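Supercomputing is the process of using a supercomputer to compute or simulate real-world scenarios. Supercomputers consist of tens of thousands of processors, all working together to complete large, complex tasks that would be too difficult, expensive, or time-consuming to carry out in the real world.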

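Supercomputers are used for a wide range of simulations across many fields, including weather forecasting, molecular dynamics, physical simulations, and more. Large academic institutions and government-funded organizations often build supercomputers to carry out large-scale research projects. Using a supercomputer requires significant upfront investment in components and facilities, specialized IT and facilities expertise, and time for implementation and ongoing maintenance.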

High Performance Computing (HPC)

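High performance computing is a broad term that can include supercomputers, but it generally refers to the use of aggregated high-performance computers or server clusters with specialized components assembled for a particular application or type of workload.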

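HPC is often used to solve the same kinds of computational problems as supercomputing, but because it can run anywhere from an engineer's workstation to a large data center, HPC is more commonly used. Across industries, technology providers are developing new tools to meet the growing HPC needs of engineers, scientists, and researchers. The recent proliferation of specialized software and hardware has led companies to devise strategies for simplifying complex technology stacks and maximizing efficiency.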

The Right Choice for Business

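As companies rely on greater computing power to gain a competitive edge, technology leaders must choose solutions that maximize the value of existing resources such as talent, software, and intellectual property. Today, most companies choose an HPC approach because it can be purpose-built and scaled to business needs, whereas supercomputers are typically characterized by static architectures, significant investment, and long service lives. Cloud HPC and HPC-as-a-service offerings have lowered the barrier to entry for organizations deploying advanced computing workloads that were previously too complex or too costly. Greater access to computational engineering resources can also help companies accelerate R&D projects and meet budget goals.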