5 Winning Strategies to Accelerate Engineering: Cloud HPC is Intelligent (Part 4 of 5)

- Editor's note: This post is part of a 6-part blog series. Read the full report here.

4. The Five Winning Strategies: From Static to Intelligent

Traditional HPC is Static

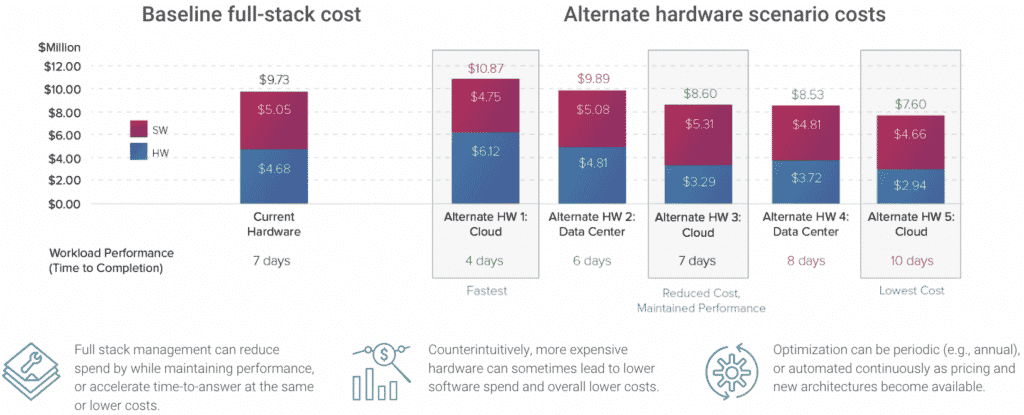

Given the traditional tightly coupled approach to HPC, performance and analytics intelligence was not critical. Once configurations were stable and tuned, they were rarely changed, and there was no reason to change them, which in turn gave little reason to invest in software and hardware performance analytics. Carrying this approach into a dynamic environment like the cloud naturally leads to challenges. In the cloud, new hardware options are added frequently, each delivering potential gains (or losses) in application performance. HPC admins cannot reasonably help users navigate this landscape without the benefit of intelligence.

HPC Built for the Cloud is Intelligent

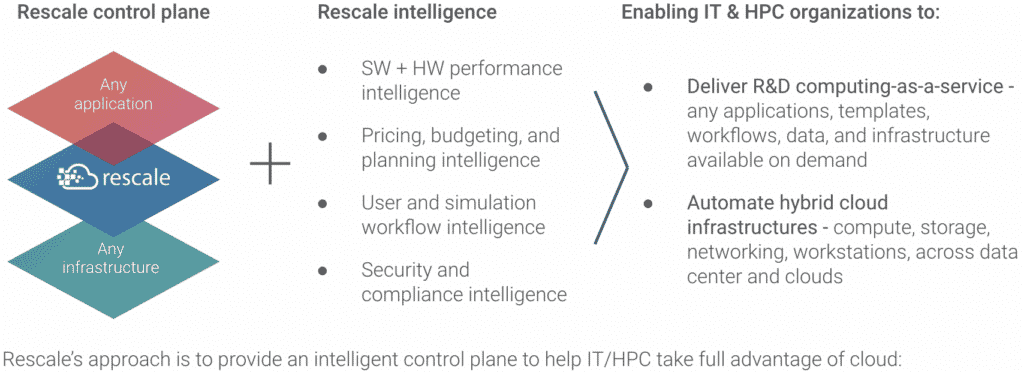

A built-for-the-cloud approach to HPC starts from recognizing that intelligence is required to get the most from cloud environments. In an environment where new options are added frequently and conditions change constantly, intelligence on the performance, cost, and other aspects of the HPC environment is key to achieving business outcomes and managing risk. Examples of intelligence may include:

- Software and hardware performance intelligence – How do different architectures impact the full stack costs of workloads, including software licensing and infrastructure costs, as well as time-to-solve?

- Pricing, budgeting, and planning intelligence – Are projects running on time and on budget? Is projected growth in HPC use consistent with budgets and expected hardware performance improvements?

- User and simulation workflow intelligence – Are users running workloads properly with the appropriate software versions and data? Are they using the proper templates and computational pipelines?

- Security and compliance intelligence – From where are users logging in worldwide? What data are they uploading to and downloading from the HPC environment? Are password and timeout policies being enforced?
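To make the first question above more concrete, here is a minimal sketch of a full-stack cost comparison across architectures. It assumes that both infrastructure and software licenses are priced per core-hour, which is a simplification (real license models vary widely). The `Architecture` class, the function names, and every price and runtime below are hypothetical, illustrative values, not Rescale data or benchmark results.

```python
from dataclasses import dataclass


@dataclass
class Architecture:
    """Hypothetical description of one cloud instance architecture."""
    name: str
    cores: int              # cores used for the job
    core_hour_price: float  # infrastructure cost per core-hour (USD, illustrative)
    runtime_hours: float    # measured wall-clock time-to-solve for the workload


def full_stack_cost(arch: Architecture, license_price_per_core_hour: float) -> float:
    """Infrastructure plus software license cost for one run.

    Assumes (hypothetically) that licenses are billed per core-hour.
    """
    core_hours = arch.cores * arch.runtime_hours
    return core_hours * (arch.core_hour_price + license_price_per_core_hour)


def rank_by_cost(archs: list[Architecture], license_price_per_core_hour: float):
    """Return (name, cost, runtime_hours) tuples sorted from cheapest to most expensive."""
    results = [
        (a.name, full_stack_cost(a, license_price_per_core_hour), a.runtime_hours)
        for a in archs
    ]
    return sorted(results, key=lambda r: r[1])


if __name__ == "__main__":
    # Illustrative numbers only: arch-a has a higher per-core price but runs faster.
    candidates = [
        Architecture("arch-a", cores=64, core_hour_price=0.05, runtime_hours=2.0),
        Architecture("arch-b", cores=64, core_hour_price=0.04, runtime_hours=2.6),
    ]
    for name, cost, runtime in rank_by_cost(candidates, license_price_per_core_hour=0.10):
        print(f"{name}: ${cost:.2f} total, {runtime:.1f} h time-to-solve")
```

With these made-up numbers, arch-a ranks as cheaper overall despite its higher per-core price, because it finishes sooner and the license cost scales with core-hours. Surfacing trade-offs like this is what software and hardware performance intelligence is meant to do.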

As HPC organizations need to support more users and more software/hardware architectures, intelligence becomes critical to capturing the value of cloud-based HPC. This intelligence also needs to be coupled with an automation engine that helps teams make better technical, business, and operational choices, which would otherwise be unmanageable for engineering and IT organizations.

Rescale’s approach is to provide an intelligent control plane that helps IT/HPC teams take full advantage of the cloud:

- Financial controls – Enables budget monitoring, alerting, and enforcement, helping to give business leaders visibility into the value and impact of HPC jobs

- Security and access controls – Provides granular controls over users, access, software versions, and workflows, to meet compliance and security requirements

- Multi-team controls – Enables secure and shared workspaces for better engineering collaboration inside and between companies

- Software and software license controls – Enables IT teams to manage their application portfolios to ensure projects efficiently use the right license at the right time

- Infrastructure architecture controls – Defines enterprise policies on which architectures can be used by which teams to maximize cost/performance or simulation throughput, based on performance intelligence
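The financial and infrastructure architecture controls above can be pictured as a policy check that runs before a job is submitted. The sketch below is purely illustrative: `TeamPolicy`, `check_job`, the 80% alert threshold, and the "allow"/"alert"/"deny" outcomes are hypothetical and do not describe Rescale's actual control plane or API.

```python
from dataclasses import dataclass


@dataclass
class TeamPolicy:
    """Hypothetical per-team policy held by a control plane (not Rescale's API)."""
    allowed_architectures: set[str]  # architectures approved for this team
    budget: float                    # total budget for the period (USD)
    spent: float = 0.0               # spend recorded so far in the period
    alert_fraction: float = 0.8      # alert once projected spend reaches 80% of budget


def check_job(policy: TeamPolicy, architecture: str, estimated_cost: float) -> str:
    """Decide whether a job may run: returns 'deny', 'alert', or 'allow'."""
    if architecture not in policy.allowed_architectures:
        return "deny"  # architecture control: not approved for this team
    projected = policy.spent + estimated_cost
    if projected > policy.budget:
        return "deny"  # budget enforcement: job would exceed the budget
    if projected >= policy.alert_fraction * policy.budget:
        return "alert"  # budget alerting: job may run, but notify budget owners
    return "allow"


if __name__ == "__main__":
    policy = TeamPolicy(allowed_architectures={"arch-a"}, budget=1000.0, spent=700.0)
    for arch, cost in [("arch-b", 10.0), ("arch-a", 50.0), ("arch-a", 150.0), ("arch-a", 400.0)]:
        print(arch, cost, check_job(policy, arch, cost))
```

Keeping the check a pure function (it does not record spend) makes the policy easy to test in isolation; a real system would also need to update `spent` as jobs actually complete.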

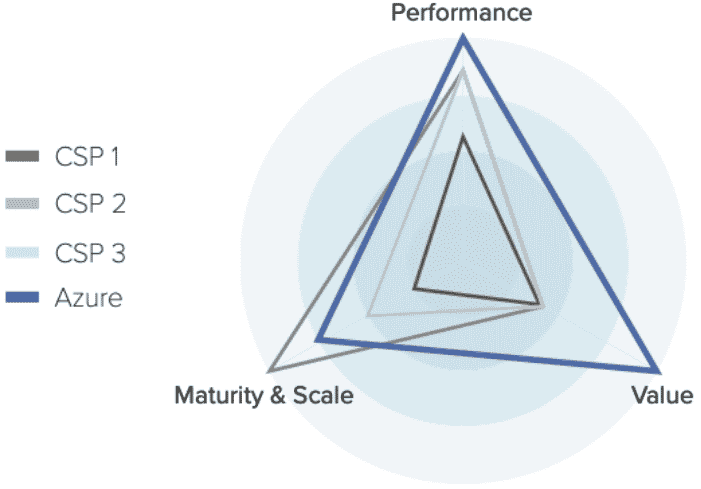

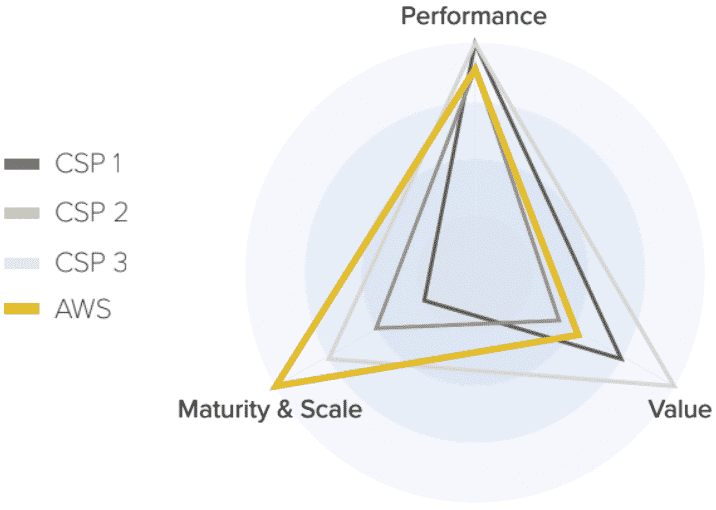

Sample workload where an AWS architecture provides competitive per-core performance and value, with high capacity

Sample workload where an Azure architecture delivers best per-core value and performance